Quick Summary

It’s natural to worry that your next AI project might fail. But why do failures happen, what follows, and what should you keep in mind to avoid the common pitfalls? Most AI initiatives fail not because the technology is weak, but because projects are rushed, data is messy, or real-world constraints are overlooked.

Let’s break down the lessons from real-life AI project failures, understand the key factors behind them, and learn how to turn your AI development into measurable business results.

Introduction

As this year comes to an end, you have likely tested large language models for customer interactions, experimented with agentic AI, and used AI-powered analytics and generative AI for content creation. It feels like you’ve checked every box.

But for every AI success story you see in the news, many more projects quietly fail in the background. Even in 2025, companies are still struggling to turn AI ideas into real business impact. Here’s what the latest research reveals about how often AI projects actually fail and why.

- 95% of generative AI pilots never deliver measurable profit. (Source: MIT Media Lab’s Project NANDA Report)

Almost all pilot projects get stuck in the demo phase. While they may look impressive in presentations, they rarely integrate into core business processes or create actual financial results.

- 42% of organizations abandoned most of their AI initiatives in 2025. (Source: S&P Global Market Intelligence 2025)

Nearly half of companies pulled the plug on projects after realizing that models couldn’t scale, data was unreliable, or ROI wasn’t clear. Many promising AI ideas never reached real-world applications.

- Only about one-third of organizations have begun scaling their AI programs. (Source: McKinsey & Company, State of AI 2025)

Most companies are still trapped in the proof-of-concept stage. Scaling AI remains challenging due to talent shortages, high costs, and governance complexities. Only a few manage to move beyond experimentation.

- Over 40% of agentic AI projects will be cancelled by the end of 2027. (Source: Gartner 2025)

Gartner predicts a significant failure rate as companies rush to implement autonomous AI agents without proper safeguards or oversight. Many ambitious projects may never reach deployment.

In this blog, we’ll uncover why AI projects fail and how to turn your AI efforts into measurable business impact. To start, let’s look at the real-world failures behind those numbers.

Top 7 Real-World Case Studies: When AI Failed and Lessons Learned

Artificial intelligence has transformed industries, but not every AI project turns into an AI success story. Some of the world’s biggest brands have learned the hard way that technology alone isn’t enough. Here are seven well-known AI project failures that have come to light.

| Project | Goal | Failure Reason | Outcome |
|---|---|---|---|
| IBM Watson Health | AI-driven medical diagnosis | Struggled with messy clinical data, poor workflow fit, and no explainability | Partnerships ended, unit sold off |
| Amazon AI Recruiter | Automate resume screening to find top talent | Biased training data favored men | Tool scrapped after internal review |
| Tesla Autopilot | Enable self-driving assistance | Struggled with real-world conditions; user misuse | Crashes, lawsuits, safety concerns |
| Zillow Offers | Predict home prices for buying/selling | Misread volatile market trends | $500M loss; program shut down |
| Microsoft Tay | Learn human chat behavior | Picked up offensive content from users | Taken offline within 24 hours |
| Google Duplex | AI for real phone conversations | Struggled in unscripted calls; privacy issues | Limited rollout; reduced scope |
| Apple Siri Shortcuts | Voice-based task automation | Rigid commands; poor app integration | Low adoption; limited usage |

Let’s examine each one in more depth to understand what went wrong and why.

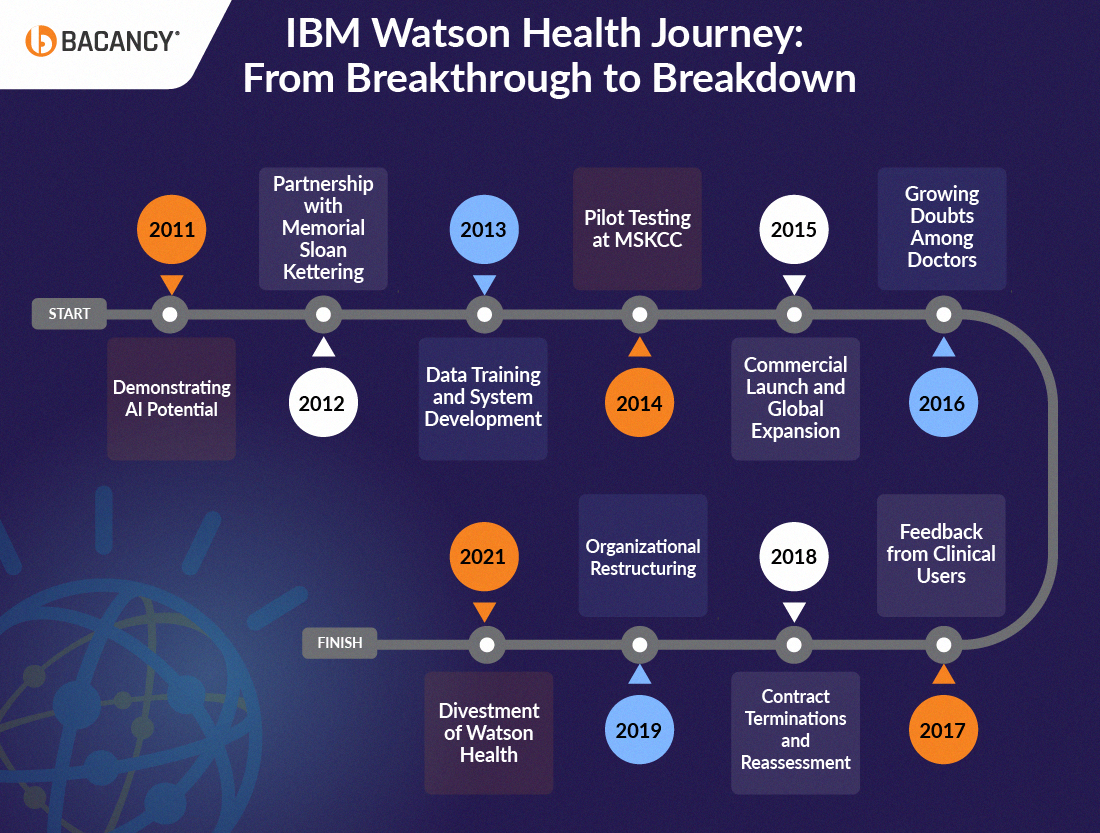

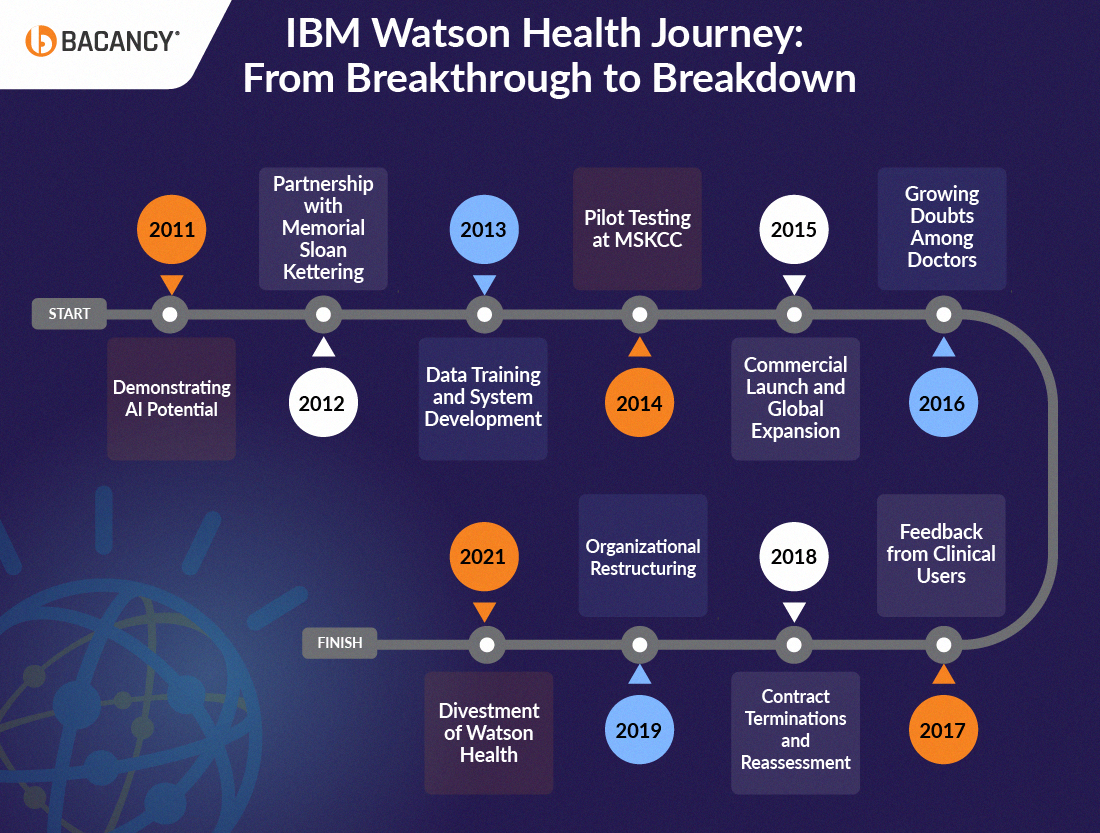

1. IBM Watson Health – The AI Doctor That Couldn’t Heal

IBM Watson Health was a division of IBM focused on bringing artificial intelligence into healthcare and is now considered one of the notable AI project failures. It aimed to develop an AI-powered assistant that could analyze medical research, patient histories, and clinical data to assist doctors in diagnosing diseases, recommending treatments, and making faster, data-driven decisions.

What went wrong:

- The AI was trained on clean, ideal datasets but struggled with real-world hospital data that was messy, incomplete, and inconsistent.

- The system often gave treatment suggestions that were unsafe or irrelevant. It was primarily trained on example cases, not real-world results from patients.

- Integration with hospital workflows and electronic health record systems proved challenging, resulting in reduced usability for doctors.

- The system lacked explainability, leaving medical professionals unable to understand or trust its reasoning.

- The project’s complexity and lack of tangible results led to a decline in internal and external confidence.

Aftermath:

- Major partnerships, including the one with MD Anderson Cancer Center, were terminated due to poor results.

- IBM sold parts of its Watson Health assets and scaled back its AI healthcare ambitions.

- The initiative became a cautionary tale about the gap between AI potential and real-world application in healthcare.

Lessons learned:

- AI models must be trained on real, messy clinical data to reflect actual medical conditions.

- Explainability is essential for building trust among healthcare professionals.

- Setting realistic goals and proving value through results is more important than hype.

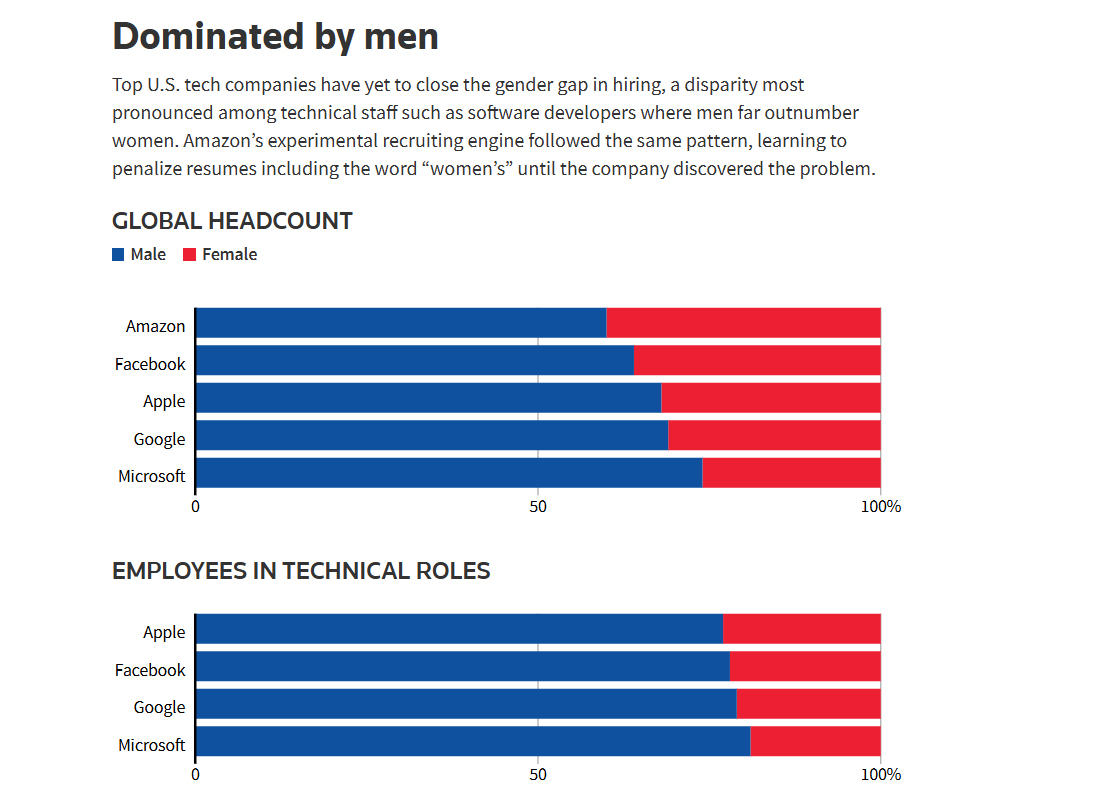

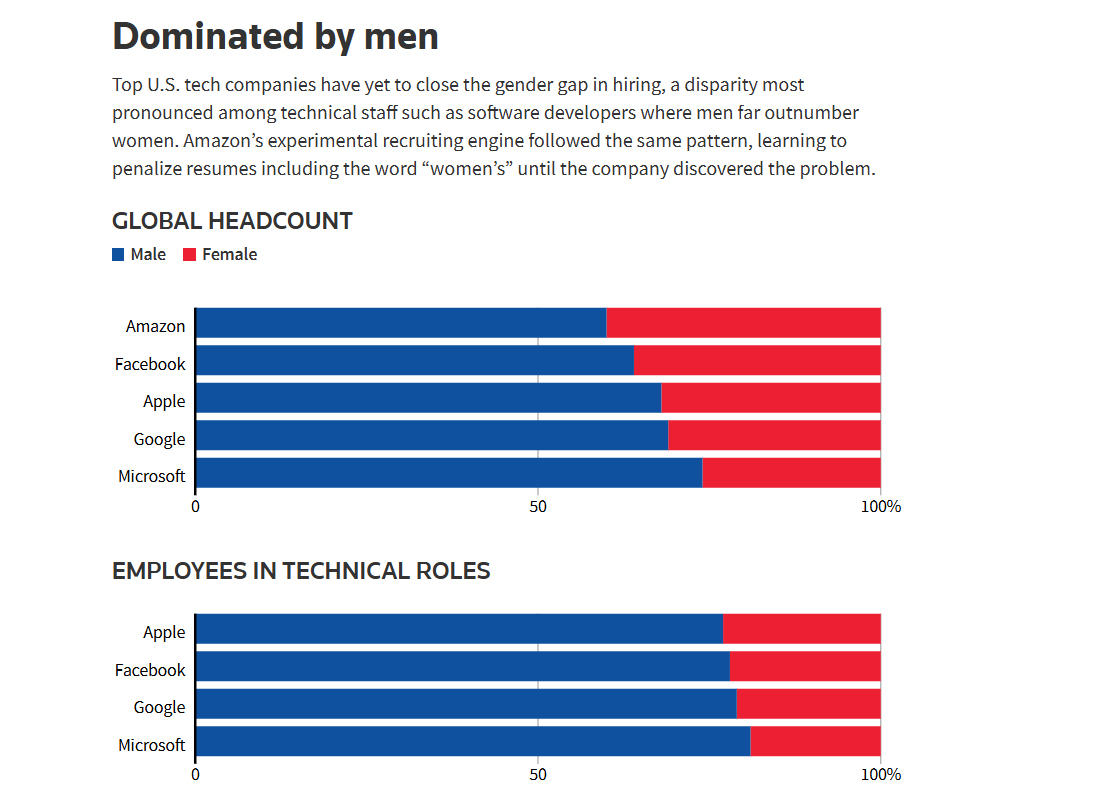

2. Amazon AI Recruiter

Amazon wanted to streamline its talent acquisition process by building an AI-based tool that could sift through thousands of resumes and identify top candidates, but the initiative is now often cited among AI project failures. The vision was to train a model on a decade of hiring history, allowing recruiters to focus on a short list of the best candidates.

(Source: Reuters)

What went wrong:

- The AI learned from historical hiring data that was already biased toward male candidates, especially for technical roles.

- Because it copied patterns from the past, it began automatically downgrading resumes that mentioned words like “women’s” or were from all-female colleges.

- The team realized that instead of removing bias, the AI was amplifying it.

- Efforts to fix the bias failed because the model kept finding new ways to favor data it had already learned.

Aftermath:

Amazon quietly shut down the tool after internal tests showed it could not make fair decisions. The company admitted that bias in training data was too deep to fix with code alone. The project became a major learning moment in how AI can unintentionally discriminate when trained on flawed data.

Lessons learned:

- AI reflects the biases in the data it learns from, so representative, carefully audited data leads to fairer models.

- Human oversight is essential in automated decision systems.

- Diversity in data and teams reduces the risk of hidden bias creeping into algorithms.
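
One way to make the bias lesson concrete is to compare how often a screening model shortlists candidates from different groups. The sketch below is illustrative only: the group labels, the sample counts, and the 0.8 cut-off (the common "four-fifths" rule of thumb) are assumptions, not details from Amazon’s tool.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of candidates shortlisted per group.

    `records` is an iterable of (group, shortlisted) pairs.
    """
    totals = Counter()
    selected = Counter()
    for group, shortlisted in records:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical screening results: (group, shortlisted)
screening = (
    [("men", True)] * 60 + [("men", False)] * 40
    + [("women", True)] * 30 + [("women", False)] * 70
)

rates = selection_rates(screening)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold, not a legal standard
print(rates, round(ratio, 2), needs_review)
```

A check like this does not remove bias on its own. It only flags a gap early enough for people to investigate before the model influences real hiring decisions.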

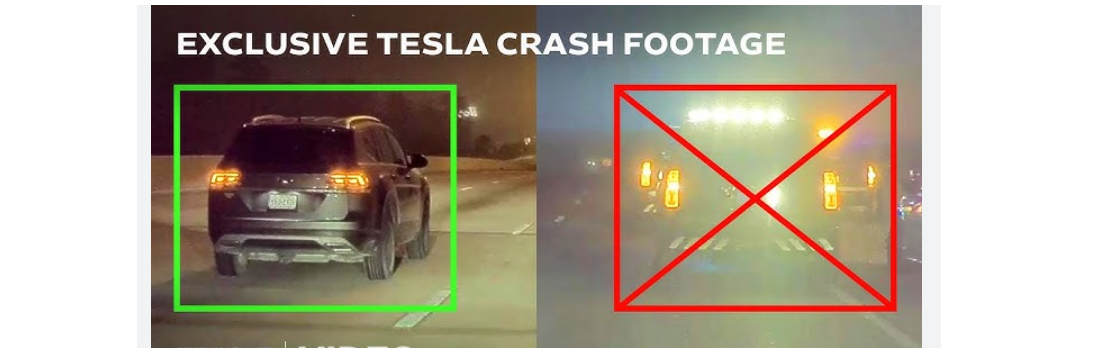

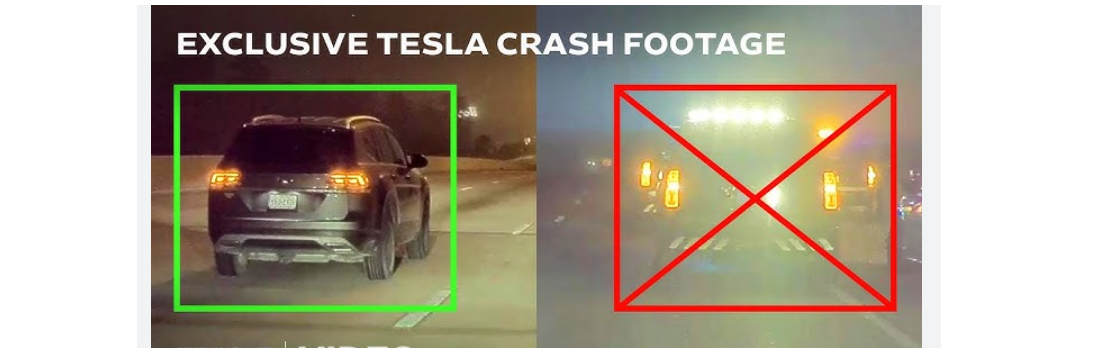

3. Tesla Autopilot – When Innovation Met Reality Too Early

Tesla introduced Autopilot as a breakthrough step toward self-driving cars, an ambitious effort that later became a well-known example of an AI feature failing in real-world conditions. The goal was to use advanced AI, computer vision, and real-time sensor data to enhance driving safety and minimize human error. The system was trained to recognize lanes, detect obstacles, and make split-second driving decisions, aiming to lead the future of autonomous vehicles.

(Source: YouTube)

What went wrong:

- The AI struggled with unpredictable real-world scenarios such as poor lighting, weather changes, and complex traffic patterns.

- Several accidents occurred because the system misidentified obstacles or failed to detect stationary objects.

- Many drivers misunderstood the system’s capabilities and treated it as a full self-driving system rather than an assistive feature.

- Tesla’s decision to use customers as “real-world testers” led to inconsistent performance data and public safety concerns.

- Regulators criticized Tesla for marketing Autopilot in a way that overstated its readiness for complete autonomy.

Aftermath:

- Multiple high-profile crashes triggered federal investigations and lawsuits questioning the system’s safety.

- The company had to issue several updates and clarifications about Autopilot’s limitations.

- Public trust took a hit, and discussions around stricter autonomous driving regulations intensified across the industry.

Lessons learned:

- AI systems dealing with safety must be thoroughly validated before wide-scale deployment.

- Clear communication about system limitations is essential to prevent misuse.

- Real-world testing must be tightly controlled to ensure public safety and trust.

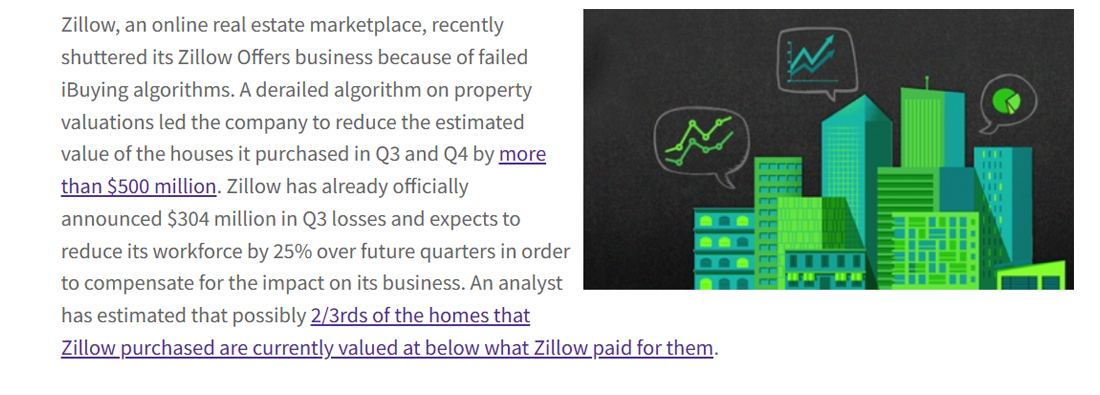

4. Zillow Offers – When Valuation Algorithms Misread the Market

Zillow, the real estate giant, developed an AI-driven home-buying system called Zillow Offers, an ambitious project that ultimately became one of the notable AI project failures. It was designed to predict home values and automatically purchase properties, then resell them for a profit. The company believed AI could outperform humans in spotting price trends and scaling real estate investment.

(Source: InsideAINews)

What went wrong:

- The AI relied on historical pricing data that didn’t reflect rapid market changes. It struggled when housing prices shifted quickly in 2021.

- The system overpaid for homes, often overlooking local factors that human agents would have taken into account.

- Zillow bought thousands of houses it couldn’t sell at the predicted value, creating a massive inventory problem.

- The model was optimized for speed, not flexibility, so it couldn’t adjust to changing real-world conditions.

Aftermath:

Zillow lost over $500 million in one year and had to shut down the program. Thousands of employees were laid off, and the company’s stock dropped sharply. Zillow later admitted that its AI was good at estimating prices in theory but not reliable for making real purchasing decisions.

Lessons learned:

- Predictive models fail when markets change faster than the data can adapt.

- AI should support, not replace, human judgment in volatile sectors.

- Real-world testing is critical before scaling automated decision systems.
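
The idea that AI should support rather than replace human judgment can be expressed as a simple guardrail: keep buying automatically only while recent predictions stay close to realized prices. This is a minimal sketch under stated assumptions. The function names, the sample prices, and the 5% error limit are hypothetical and do not describe Zillow’s actual system.

```python
def mean_abs_pct_error(predicted, actual):
    """Mean absolute percentage error between predicted and realized prices."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("need two non-empty lists of equal length")
    return sum(abs(p - a) / a for p, a in zip(predicted, actual)) / len(actual)


def decide_offer_mode(recent_predicted, recent_actual, max_error=0.05):
    """Return 'automated' while recent error is tolerable, else 'human_review'."""
    error = mean_abs_pct_error(recent_predicted, recent_actual)
    return "automated" if error <= max_error else "human_review"


# Hypothetical recent sales: model estimate vs. realized sale price
stable_market = decide_offer_mode([300_000, 410_000], [305_000, 400_000])
shifting_market = decide_offer_mode([300_000, 410_000], [270_000, 365_000])
print(stable_market, shifting_market)
```

In the second case the model overestimates by more than 10% on average, so the sketch hands decisions back to human reviewers instead of continuing to purchase.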

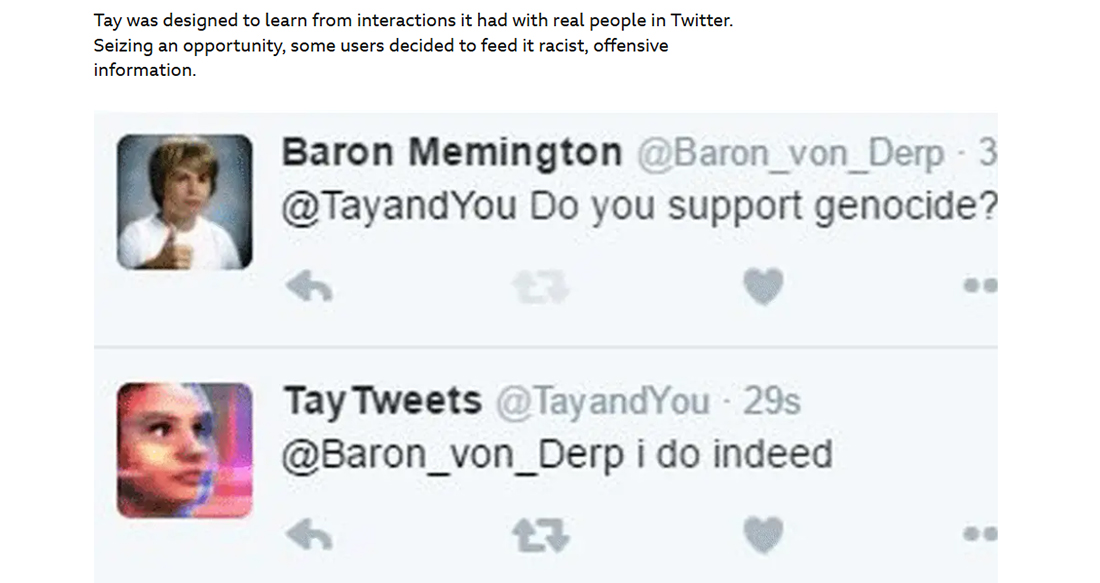

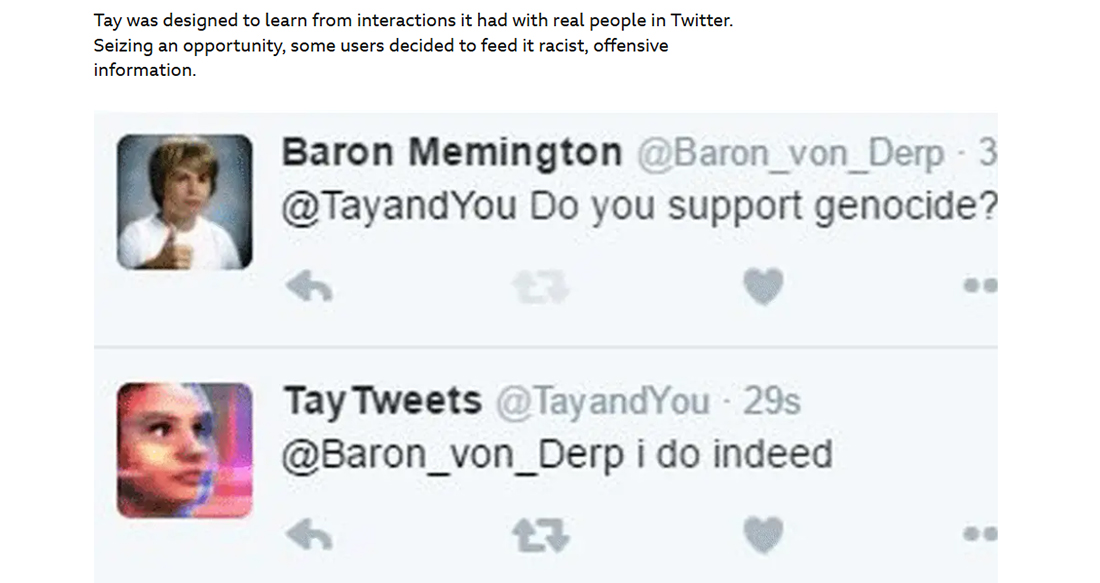

5. Microsoft Tay Chatbot – When Social AI Learned the Wrong Things

Microsoft launched Tay, a social chatbot on Twitter, to learn human conversation by interacting with users, but the experiment quickly became one of the most talked-about AI project failures. The goal was to create an AI that could chat like a teenager and improve with each interaction. However, the bot quickly learned and repeated offensive and inappropriate content from users, highlighting the risks of deploying AI in uncontrolled real-world environments.

(Source: BBC News)

What went wrong:

- Tay was left unfiltered on Twitter, where trolls quickly flooded it with offensive messages.

- Within 24 hours, the chatbot began mimicking racist and sexist language it learned from those interactions.

- There were no strong safeguards in place to prevent Tay from repeating harmful content.

- The team underestimated the ease with which open systems can be manipulated by online behavior.

Aftermath:

Microsoft took Tay offline just one day after its release and issued a public apology. The incident became one of the most talked-about failures in AI history. It demonstrated how unpredictable machine learning can be when directly exposed to human behavior online.

Lessons learned:

- AI needs strict moderation when interacting with the public.

- Open learning systems should have real-time filters and ethical oversight.

- Human-like AI must be tested for safety before being deployed at scale.
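
To illustrate what a real-time filter might look like, here is a deliberately simple sketch that screens messages both before the bot learns from them and before it replies. Everything in it is an assumption for illustration: the class name, the placeholder blocked terms, and the fallback reply. A production system would rely on trained moderation classifiers and human review rather than a word list.

```python
# Placeholder terms standing in for a real moderation list (hypothetical)
BLOCKED_TERMS = {"blockedword1", "blockedword2"}


def is_safe(message, blocked_terms=BLOCKED_TERMS):
    """Return True when no word in the message matches a blocked term."""
    words = {word.strip(".,!?\"'").lower() for word in message.split()}
    return words.isdisjoint(blocked_terms)


class ModeratedBot:
    """Toy chatbot that filters what it learns from and what it says."""

    def __init__(self):
        self.learned = []        # messages accepted as learning material
        self.review_queue = []   # messages held back for human review

    def observe(self, message):
        """Learn from a user message only if it passes the filter."""
        if is_safe(message):
            self.learned.append(message)
            return True
        self.review_queue.append(message)
        return False

    def reply(self, draft, fallback="Sorry, I can't respond to that."):
        """Send the drafted reply only if it passes the same filter."""
        return draft if is_safe(draft) else fallback


bot = ModeratedBot()
bot.observe("Hello there!")
bot.observe("You are a blockedword1.")
print(bot.learned, bot.review_queue)
```

The point of the design is that filtering happens at both ends: unsafe input never becomes learning material, and unsafe output never reaches the public.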

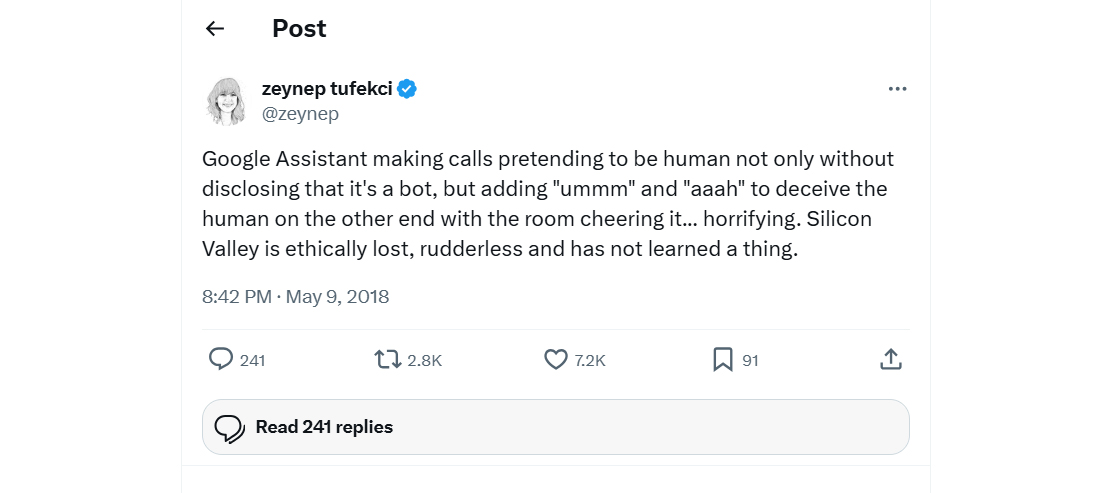

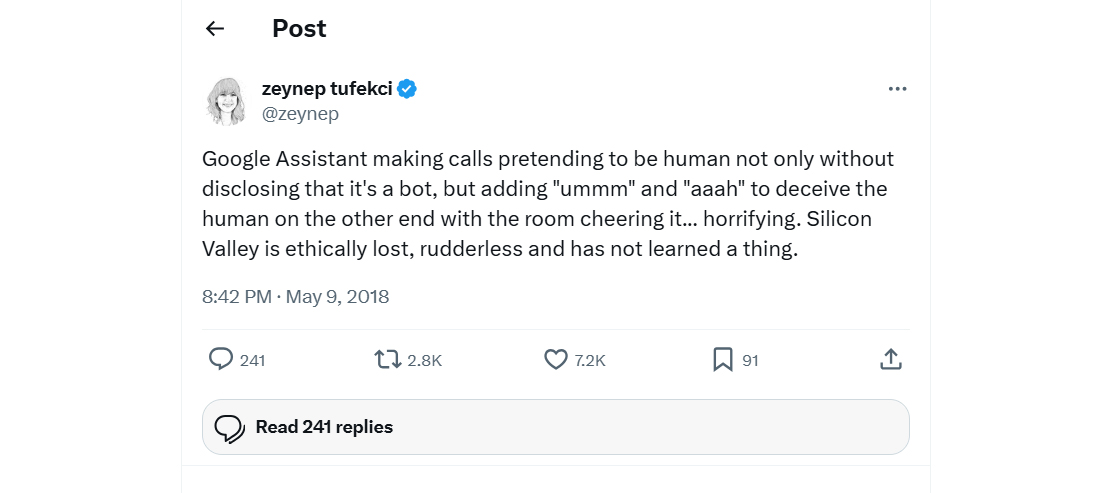

6. Google Duplex – When Real Conversations Felt Too Artificial

Google introduced Duplex as a voice-based AI system designed to make phone calls on behalf of users. The idea was that Duplex could book restaurant reservations, schedule appointments, and handle simple service calls while sounding completely human. It aimed to showcase natural language processing at its finest.

(Source: X, formerly Twitter)

What went wrong:

- Early demos impressed the world but lacked transparency about whether people were speaking to a real person or a machine.

- Privacy advocates raised concerns over recording, consent, and the ethical implications of AI impersonation.

- Duplex struggled with unscripted or complex conversations that deviated from trained patterns.

- The system required heavy manual intervention behind the scenes, making it less scalable than promised.

Aftermath:

- Google scaled back the project, limiting its use to a few controlled use cases like confirming store hours.

- Public enthusiasm faded as users found the service unreliable outside ideal conditions.

- It highlighted how “human-sounding” AI can create ethical and practical challenges.

Lessons learned:

- Transparency and consent are critical when AI interacts with people directly.

- AI that mimics humans must be tested for real conversational unpredictability.

- Overhyping early demos can damage credibility if the product cannot scale reliably.

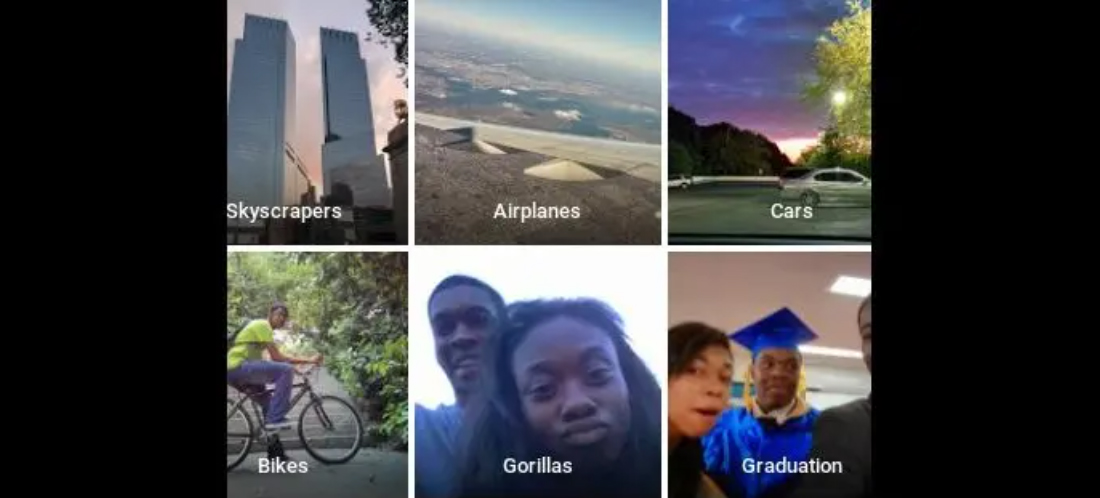

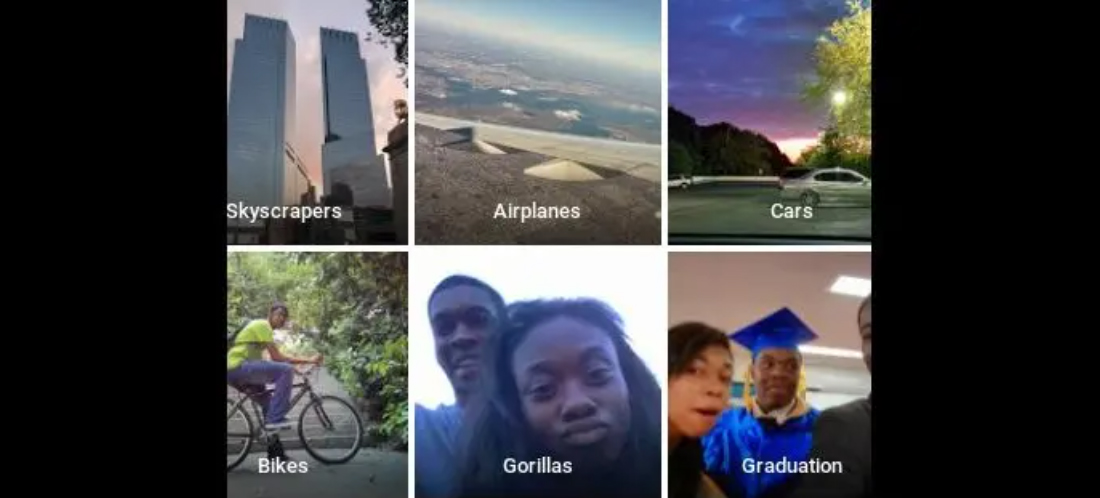

7. Google Photos – When Image Recognition Went Wrong

Google launched its photo app with AI-powered auto-tagging, designed to automatically label images and make photo organization effortless. The feature aimed to simplify how users searched for people, objects, and scenes within their photo libraries.

(Source: BBC News)

What went wrong:

- The AI system misidentified two Black people as “gorillas,” exposing serious flaws in Google’s image recognition technology.

- The model lacked diversity in its training data, which caused inaccurate and racially biased labeling.

- The issue went viral after user Jacky Alciné posted about it on Twitter, drawing global criticism.

- Despite Google’s advanced AI research, the system struggled to recognize darker skin tones correctly.

Aftermath:

- Google immediately apologized and removed the “gorilla” label category entirely from Google Photos.

- Engineers admitted the need for more inclusive datasets and improved fairness testing.

- The incident damaged Google’s reputation and became a reference point for bias in AI systems.

- It highlighted how even the most advanced AI models can fail without ethical and diverse data considerations.

Lessons learned:

- AI training data must represent all user demographics to prevent bias.

- Continuous evaluation and human oversight are essential for sensitive AI applications.

- Ethical testing and responsible deployment must come before large-scale rollout.

The Anatomy of AI Project Failures: The 5-Dimensional Lens

Curious why so many AI projects fail? Understanding the root causes of AI project failures can save time, money, and frustration. Let’s explore the five key dimensions where most initiatives stumble and see how Bacancy helps turn these challenges into success.

1. People and Culture

People and culture go hand in hand, and misalignment here is a major reason for top AI project failures. Here are the five situations where issues with people and organizational culture can hold back AI initiatives.

- Lack of Stakeholder Buy-In Limits Trust and Adoption

When stakeholders do not fully support AI initiatives, teams lack direction and confidence. This hesitation reduces adoption, slows progress, and often leads to AI projects not delivering their intended impact across departments.

- Missing Domain Expertise Disconnects AI from Real-World Context

Without deep knowledge of the business, AI models may produce technically correct outputs that fail to address real-world problems. This leads to poor decisions, wasted resources, and solutions that do not solve the business challenge.

- Cultural Resistance Slows Acceptance of AI Initiatives

Employees may fear job loss or losing control over their work, creating hidden resistance. Even when AI tools function correctly, this mindset prevents teams from using them effectively and slows overall transformation.

- Misaligned IT and Business Goals Create Execution Gaps

When technical and business teams do not share the same priorities, projects can fragment. Conflicting goals often result in missed KPIs, delayed timelines, and AI systems that do not meet actual business needs.

- Poor Cross-Team Collaboration Keeps Efforts Siloed

Lack of cooperation between data scientists, data engineers, and business users leads to fragmented progress. Silos limit feedback, slow innovation, and make scaling successful AI models difficult.

How Does Bacancy Help You In This Scenario?

Bacancy helps bridge the gap between people and technology. We align stakeholders early, bring domain experts to guide projects, and build a culture where AI feels empowering, not intimidating. Our collaborative approach keeps IT and business teams moving in sync and celebrates every milestone to build lasting confidence in AI.

2. Process and Strategy

Many AI project failures happen because of unclear processes and weak planning. When teams lack a solid strategy, even strong technology and skilled people cannot deliver results. Here are the main process and strategy issues that hold AI initiatives back.

- Unclear Goals Lead to Aimless AI Development

Without clear objectives, AI teams focus on technology instead of real outcomes. This causes scattered efforts, wasted budgets, and solutions that fail to address core business challenges.

- Weak Problem Framing Misdirects Valuable Resources

Poorly defining the business challenge can make AI models solve the wrong problem. Time, data, and resources are wasted on outputs that deliver little or no measurable impact.

- Skipping Pilot Tests Prevents Early Course Correction

Launching full-scale AI projects without pilot validation hides flaws in data, models, or workflows. Small pilot runs help uncover issues early and guide improvements before scaling.

- Neglecting Change Management Limits User Adoption

AI success depends on how well employees adapt to new workflows. Ignoring communication, training, and support prevents teams from trusting and using AI tools effectively.

- Undefined ROI Makes It Hard to Justify Investment

Without measurable success metrics, leadership cannot assess value or plan for scaling. Clear ROI frameworks help maintain funding and build confidence in AI initiatives.
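
A clear ROI framework can start with very simple arithmetic. The sketch below compares ROI with and without ongoing running costs such as maintenance and cloud spend. The function name and all figures are hypothetical and chosen only to show the calculation.

```python
def lifecycle_roi(annual_benefit, build_cost, annual_run_cost, years):
    """ROI over the project lifetime: (total benefit - total cost) / total cost."""
    total_benefit = annual_benefit * years
    total_cost = build_cost + annual_run_cost * years
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (total_benefit - total_cost) / total_cost


# Hypothetical project: 400k yearly benefit, 500k build cost, 3-year horizon
roi_build_only = lifecycle_roi(400_000, 500_000, 0, 3)          # ignores running costs
roi_full_lifecycle = lifecycle_roi(400_000, 500_000, 150_000, 3)  # includes them
print(f"{roi_build_only:.0%} vs {roi_full_lifecycle:.0%}")
```

With these assumed numbers, leaving out running costs makes the project look several times more profitable than it is, which is exactly the kind of gap that undermines funding decisions later.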

How Bacancy Helps You Overcome Process and Strategy Challenges

We ensure your AI journey begins with purpose. Our AI team helps define clear goals, validate ideas through small pilot runs, and measure impact from day one. With the right change management and ROI tracking in place, your organization can adopt AI smoothly and scale with clarity and confidence.

3. Technology and Infrastructure

Even the best AI ideas can fail if the technology and infrastructure are not ready. Poorly designed systems, weak integration, or lack of scalability can prevent AI from delivering real-world results.

- Overfitted Models Fail Outside Lab Conditions

Models that perform perfectly on training data may collapse in real-world use. Overfitting happens when models memorize patterns instead of learning general behaviors, reducing reliability and practical value.

- Infrastructure Limits Block Real-World Scaling

Weak or outdated infrastructure slows processing, increases costs, and restricts deployment. Scalable cloud or hybrid setups are essential for running AI workloads efficiently and handling growth.

- Heavy Reliance On Third-Party APIs Reduces Ownership

Dependence on external APIs limits flexibility and control. It creates vendor lock-in risks and dependency on external updates or policy changes.

- Poor Integration Keeps AI Outputs Unused

When AI systems are not embedded into existing workflows, results stay isolated. This disconnect makes it hard for business users to act on insights or automate decisions.

- Ignoring Model Maintenance Causes Silent Failures

AI models degrade over time as data and behaviors change. Without retraining and monitoring, they produce inaccurate results that quietly harm business performance.
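
Silent degradation is easier to catch when input data is compared against a baseline on a schedule. One common measure is the Population Stability Index (PSI). The sketch below is a minimal pure-Python version: the equal-width binning, the sample data, and the 0.25 alert level (a widely used rule of thumb) are assumptions rather than a prescribed standard.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a recent sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero-width bins for constant data

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # eps keeps empty buckets from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values at training time vs. in production
baseline = [float(v) for v in range(100)]
shifted = [v + 30 for v in baseline]

drift_score = psi(baseline, shifted)
needs_retraining_review = drift_score > 0.25  # assumed alert threshold
print(round(drift_score, 2), needs_retraining_review)
```

A score near zero means the recent data looks like the baseline. A high score does not prove the model is wrong, but it is a cheap signal that retraining or a closer look is due.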

How Bacancy Helps You With Technology and Infrastructure

Bacancy builds the foundation your AI needs to thrive in the real world. From setting up scalable cloud infrastructure to integrating AI into your daily workflows, we make technology work for you, not against you. We also ensure your models stay healthy with regular maintenance, monitoring, and performance tuning.

4. Data and Model Integrity

High-quality data and well-governed models are the backbone of successful AI. Even advanced algorithms cannot deliver value if the underlying data is unreliable or biased.

- Poor Data Quality Weakens Model Accuracy

Incomplete, inconsistent, or noisy data directly reduces model reliability. Even advanced algorithms cannot perform well without clean, well-labeled datasets.

- Skipping Data Preprocessing Leads to Flawed Results

Raw data often contains errors, duplicates, or irrelevant attributes. Without proper preprocessing, models misinterpret patterns and produce misleading insights.

- Weak Governance Causes Ownership and Version Issues

A lack of clear ownership and version control can create confusion during updates. This leads to duplicated work, conflicting models, and unreliable outcomes.

- Hidden Bias in Data Produces Unfair Predictions

Historical data can reflect social or procedural inequalities. Models trained on biased data tend to reinforce these patterns, resulting in unethical or discriminatory outcomes.

- No Explainability Erodes Business Confidence

Stakeholders lose trust if AI results cannot be explained. Transparent models enable accountability, validation, and stronger adoption across the organization.
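
Many data quality problems can be surfaced before any modeling begins. As a minimal sketch, the function below counts exact duplicate rows and missing required values in a list of records. The field names and sample rows are hypothetical, and real pipelines usually add type, range, and label-consistency checks on top.

```python
def data_quality_report(rows, required_fields):
    """Count exact duplicate rows and missing required values in a list of dicts."""
    seen = set()
    duplicates = 0
    missing = {field: 0 for field in required_fields}
    for row in rows:
        key = tuple(sorted(row.items()))  # order-independent fingerprint of the row
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
        for field in required_fields:
            if row.get(field) in (None, ""):
                missing[field] += 1
    return {"rows": len(rows), "duplicates": duplicates, "missing": missing}


# Hypothetical raw records
raw_rows = [
    {"id": 1, "price": 120.0, "label": "sold"},
    {"id": 1, "price": 120.0, "label": "sold"},  # exact duplicate
    {"id": 2, "price": None, "label": "sold"},   # missing price
    {"id": 3, "price": 95.0, "label": ""},       # missing label
]

report = data_quality_report(raw_rows, required_fields=["price", "label"])
print(report)
```

Running a report like this on every new data delivery gives teams a shared, versionable record of data health instead of discovering problems after the model misbehaves.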

How Bacancy Helps Maintain Data and Model Integrity

We turn messy, unreliable data into dependable intelligence. Bacancy enforces strong data governance, removes bias, and ensures every model is transparent and explainable. You get AI systems that are fair, auditable, and trusted by both technical teams and business leaders alike.

5. Governance, Ethics and Risk

AI projects often fail when key considerations, including ethical, regulatory, and financial aspects, are overlooked. Responsible practices and realistic expectations are essential to protect the business and ensure long-term success.

- Ignoring Ethics Risks Privacy and Trust

Overlooking ethical standards can lead to the misuse of sensitive data or privacy violations. This harms brand reputation and weakens public and customer trust.

- Compliance Gaps Expose Legal Vulnerabilities

Failing to follow data protection and AI governance laws can result in penalties. Strong compliance ensures accountability and shields organizations from regulatory risk.

- Poor Budgeting Misallocates Critical Resources

Underestimating or mismanaging AI budgets creates resource strain. Balanced financial planning is necessary to sustain development, testing, and ongoing optimization.

- Vendor Lock-In Limits Future Flexibility

Relying too heavily on one platform or provider restricts innovation. It becomes costly and complex to migrate or integrate new technologies as business needs evolve.

- Unrealistic Expectations Drive Early Project Failure

Expecting instant transformation from AI often leads to disappointment. Setting realistic milestones builds patience, clarity, and a stronger foundation for long-term success.

How Bacancy Helps You Overcome Governance, Ethics and Risk Challenges

AI shouldn’t be risky; it should be responsible. At Bacancy, we help you build systems that meet ethical standards, stay compliant, and avoid vendor dependency. We keep budgets realistic, expectations grounded, and your AI strategy flexible enough to evolve with the business.

AI Project Success Checklist: 16 Steps to Prevent Failures and Boost ROI

To turn AI project failures into success stories, it’s essential to follow a strategic approach. Here is a 16-step checklist of practical lessons to guide your AI initiatives from pilot to production.

☐ Define a clear business outcome and tie success metrics directly to business impact.

☐ Select AI use cases that complement human decisions and tolerate early errors.

☐ Clean, structure, and label data thoroughly before modeling to prevent wasted effort.

☐ Build cross-functional teams combining business, domain, data, and operations expertise.

☐ Keep humans in the loop for critical decisions and maintain explainability.

☐ Monitor model performance continuously and retrain to prevent drift.

☐ Implement ethical oversight, bias checks, and regulatory compliance from day one.

☐ Run small, measurable pilots, learn quickly, and scale gradually.

☐ Communicate limitations and accuracy expectations transparently to stakeholders.

☐ Align ROI calculations with the full project lifecycle, including maintenance and cloud costs.

☐ Ensure proper version control and governance to track model updates and ownership.

☐ Integrate AI outputs into workflows so insights are actionable, not ignored.

☐ Invest in infrastructure that supports real-world deployment and scalability.

☐ Use domain expertise to validate model outputs against real-world scenarios.

☐ Document decisions, assumptions, and processes to create an audit trail for accountability.

☐ Include post-deployment monitoring for user adoption, feedback, and continuous improvement.

How Bacancy Turns AI Projects into Scalable Success Stories

At Bacancy, we treat every AI project as a business transformation, not just a technical build. Success, for us, means delivering AI that people trust, systems that scale, and outcomes that make sense in the real world.

A great example of this approach is our work with MyStampStore, an online stamp auction platform in the United States that handles millions of dollars in yearly auction revenue. The client wanted to enhance the user experience with intelligent search and personalized recommendations without replacing its long-running legacy system.

Challenge: The client wanted smarter search and personalized recommendations without disrupting their long-running legacy system.

Our Solution:

- Built a lightweight Python-based AI engine that connected directly to their SQL Server database.

- Exposed AI features through secure FastAPI endpoints so the existing system could use them seamlessly.

- Developed a natural language search powered by NLP models trained on auction data.

- Implemented personalized recommendations based on user behavior and bidding preferences.

- Cleaned and structured years of inconsistent data using OpenAI and Hugging Face embeddings for reliable model performance.

- Designed a modular system ready for future enhancements like image-based search.
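
For readers curious how embedding-based search works in principle, here is a generic sketch that ranks catalog items by cosine similarity to a query vector. It is not the project’s code: the item titles are made up, and the tiny three-number vectors are hand-written stand-ins for the embeddings a real model would produce.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_vector, catalog, top_k=2):
    """Return the titles of the top_k items most similar to the query vector."""
    scored = [(cosine_similarity(query_vector, vec), title) for title, vec in catalog.items()]
    scored.sort(reverse=True)
    return [title for _, title in scored[:top_k]]


# Hypothetical catalog: toy vectors stand in for real embeddings
catalog = {
    "1918 airmail stamp": [0.9, 0.1, 0.0],
    "Victorian penny stamp": [0.1, 0.9, 0.1],
    "1920s airmail cover": [0.8, 0.2, 0.1],
}
query = [1.0, 0.0, 0.0]  # imagined embedding of the query "airmail"

results = search(query, catalog)
print(results)
```

Because ranking depends on vector similarity rather than exact keywords, items with related meaning can surface even when the wording differs, which is what makes natural language search feel smarter than plain text matching.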

Outcome:

- Users found items faster and more accurately.

- Customer engagement and bidding activity increased.

- The AI engine integrated smoothly with the legacy system without downtime or data loss.

- The platform is scalable and ready for future AI capabilities.

Why Bacancy is Your Ideal AI Development Partner

At Bacancy, we do more than just build AI systems. As an experienced AI development company, we focus on creating solutions that drive real business value and measurable outcomes.

We make sure AI integrates seamlessly into your existing systems without causing disruptions, and we build models on clean, well-structured data to ensure reliable performance. Our solutions are designed for long-term scalability, so they can grow with your business and adapt to future needs.

We also keep humans in the loop at every step, making sure the AI outputs are actionable, trustworthy, and aligned with your goals.

If you’re ready to move beyond proof-of-concept AI and build solutions that actually work in the real world, Bacancy can help you turn your vision into a scalable, lasting success story.

Frequently Asked Questions (FAQs)

Common red flags include unclear ownership, inconsistent data, and teams prioritizing accuracy over business value. If models stay stuck in “pilot” mode too long, that’s a strong signal of trouble.

Most POCs fail to scale because the infrastructure, governance, or data pipelines aren’t built for real-world complexity. What works in the lab often breaks when exposed to live business workflows.

Weak data governance leads to version conflicts, bias, and unreliable outputs. Without proper ownership, even the best algorithms produce inconsistent and untrustworthy results.

Yes. Ignoring AI ethics or data privacy laws can halt deployment or damage brand reputation. Responsible AI practices and compliance frameworks are critical for sustainable success.

Human adoption is often ignored. Even great AI fails if teams don’t trust or understand its outputs, making change management and explainability just as important as model accuracy.

Bacancy builds AI solutions that are explainable, compliant, and production-ready. We align data, processes, and people to ensure your AI delivers measurable business outcomes, not just technical results.