Quick Summary

AWS Bedrock pricing in 2026 ranges from roughly $100/month for lightweight, low-volume workloads to $5,000+/month once Bedrock Agents, Knowledge Bases, and high-throughput inference enter the picture. In this blog, we will walk you through every layer of AWS Bedrock costs, from on-demand token rates and vector storage minimums to agent token amplification and adjacent AWS charges.

Introduction

Several CTOs end up spending 1.5-2x their initial Amazon Bedrock pricing estimates. The surprising part? The overrun doesn’t come from hidden fees; it comes from AWS Bedrock costs that are difficult to calculate upfront.

That’s the trap with AWS Bedrock pricing. The official Amazon Bedrock pricing page shows clean per-token numbers, but the actual bill tells a very different story.

The bill isn’t just one line item. On-demand inference, provisioned throughput, batch processing, and add-ons like Knowledge Bases and Guardrails all stack up independently, and most teams only connect the dots after the fact.

This guide walks you through how AWS Bedrock pricing actually works in real-world scenarios, what silently inflates your AWS Bedrock cost, and how to take control before it becomes a problem worth explaining to your finance team.

How Amazon Bedrock Pricing Works in 2026

Amazon Bedrock charges you primarily for one factor: inference. The Amazon Bedrock cost structure is built on tokens processed by foundation models, with input tokens (your prompt, system instructions, and retrieved context) priced separately from output tokens (the model’s response).

Across nearly every model on the platform, output tokens cost three to five times more than input tokens. For instance, Claude Sonnet 4.5 runs $3.00 per million input tokens versus $15.00 per million output tokens, a 5x asymmetry that often matters more than the headline rate.

Understanding AWS Bedrock Token Pricing

A token is roughly 4 characters or three-quarters of a word. AWS Bedrock token pricing is quoted per million tokens for text models, per image for image models, and per minute for audio and video models.
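To make the per-million-token quoting concrete, here is a minimal sketch that estimates tokens from character count and prices a single request. The 4-characters-per-token figure is only the rule of thumb above (real tokenizers differ by model), and the function names are our own illustration, not part of any AWS SDK.

```python
import math

CHARS_PER_TOKEN = 4  # rule of thumb from the text; real tokenizers vary by model


def estimate_tokens(text: str) -> int:
    """Rough token estimate: about one token per 4 characters."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def request_cost(input_tokens: int, output_tokens: int,
                 input_rate: float, output_rate: float) -> float:
    """Cost in USD for one request; rates are USD per million tokens."""
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


prompt = "Summarize the attached incident report in three bullet points."
tokens_in = estimate_tokens(prompt)
# Example rates: $3.00 input / $15.00 output per million tokens
cost = request_cost(tokens_in, 150, input_rate=3.00, output_rate=15.00)
print(f"~{tokens_in} input tokens, estimated cost ${cost:.6f}")
```

Even in this tiny example, the 150 output tokens dominate the cost, which is why output length deserves as much attention as prompt length.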

Bedrock offers four pricing models, each with different economics:

- On-Demand for variable workloads with no commitment

- Batch Inference at 50% off for asynchronous bulk jobs

- Provisioned Throughput for reserved hourly capacity at high volume

- Cross-Region Inference for routing requests across regions without surcharge

On the free tier: Bedrock has no permanent free tier. Inference is pay-as-you-go from the first API call. New AWS accounts created after July 15, 2025, receive $200 in AWS credits ($100 on signup, $100 for completing guided activities) usable across 200+ AWS services, including Bedrock, expiring after six months. Treat this as an evaluation budget and not a long-term cost lever.

AWS Bedrock Pricing Models: 4 Tiers Explained

Picking the right tier inside the AWS Bedrock pricing models is the single highest-leverage decision in your AWS Bedrock cost structure. Here is how each works and when each wins.

1. On-Demand (Standard)

On-demand is the default pricing in the AWS Bedrock cost structure. You pay per token at the published rate with no capacity commitment and no minimum. Bedrock offers two additional service tiers under On-Demand: a Priority tier (roughly a 75% premium over the Standard tier) for guaranteed performance, and a Flex tier (roughly a 50% discount to the Standard tier) for higher-latency, best-effort delivery.

Best for: Variable workloads, prototypes, development environments, and anything with unpredictable traffic patterns.

Trade-off: AWS enforces throttling limits, so during peak demand, your requests may be rate-limited.
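As a quick illustration of how the service tiers shift the effective rate, the sketch below applies the rough Priority and Flex figures quoted above to a Standard rate. The multipliers are approximations taken from this article, not published per-model prices, so treat the output as an estimate.

```python
# Multipliers relative to the Standard on-demand rate, using the rough
# figures quoted in the text; actual premiums and discounts vary by model.
TIER_MULTIPLIER = {"standard": 1.00, "priority": 1.75, "flex": 0.50}


def tier_rate(standard_rate: float, tier: str) -> float:
    """Effective USD rate per million tokens for a given service tier."""
    return standard_rate * TIER_MULTIPLIER[tier]


# Example: a model priced at $3.00 input / $15.00 output on Standard
for tier in TIER_MULTIPLIER:
    print(f"{tier:>8}: ${tier_rate(3.00, tier):.2f} input, "
          f"${tier_rate(15.00, tier):.2f} output per M tokens")
```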

2. Batch Inference

Batch Inference accepts a JSONL file of prompts via Amazon S3, processes them asynchronously, and returns results to your S3 bucket within 24 hours. The discount is substantial: a flat 50% off On-Demand rates for supported models from Anthropic, Meta, Mistral, and Amazon.

Best for: Document summarization pipelines, content generation jobs, evaluation runs, embeddings backfills, and anything else that does not need a synchronous response.

Trade-off: Not real-time. Some models, notably DeepSeek, are excluded from Batch.
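For a sense of what the JSONL input looks like, here is a hedged sketch that builds one record per prompt. We assume the `recordId` / `modelInput` record layout and an Anthropic Messages-style request body; the body format depends on the model you target, so check the model's documentation before submitting a real job. The helper name `build_batch_records` is hypothetical.

```python
import json


def build_batch_records(prompts, max_tokens=512):
    """Yield one JSONL line per prompt for a batch inference input file."""
    for i, prompt in enumerate(prompts):
        record = {
            # Unique ID used to match outputs back to inputs (assumed format)
            "recordId": f"REC{i:08d}",
            # Model-specific request body; Anthropic Messages format assumed
            "modelInput": {
                "anthropic_version": "bedrock-2023-05-31",
                "max_tokens": max_tokens,
                "messages": [{"role": "user", "content": prompt}],
            },
        }
        yield json.dumps(record)


lines = list(build_batch_records(["Summarize document A.",
                                  "Summarize document B."]))
print("\n".join(lines))
```

The resulting lines would be written to a `.jsonl` file and uploaded to the S3 location your batch job reads from.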

3. Provisioned Throughput

Provisioned Throughput reserves dedicated model capacity measured in Model Units (MUs). You pay an hourly rate regardless of actual usage in exchange for guaranteed throughput, no rate limiting, and predictable monthly spend.

Discounts of 15% to 40% are typical with 1-month or 6-month commitments. PT is also the only way to host fine-tuned or imported custom models on Bedrock.

Break-even rule of thumb: PT becomes economical at sustained capacity utilization of roughly 80-85%. Below 60%, on-demand or batch will almost always be cheaper.

Best for: Production workloads with predictable, steady volume on a single model and strict latency requirements.

Trade-off: Capacity wasted during low-traffic hours is capacity you still pay for. Negotiate enterprise PT pricing directly with your AWS account team.
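The break-even rule of thumb can be sanity-checked with simple arithmetic. In this sketch, the hourly Model Unit rate and the on-demand cost of serving the same capacity are hypothetical placeholders; substitute the figures for your model and region.

```python
HOURS_PER_MONTH = 730  # average hours in a month


def pt_monthly_cost(hourly_rate_per_mu: float, model_units: int) -> float:
    """Provisioned Throughput is billed hourly whether or not it is used."""
    return hourly_rate_per_mu * model_units * HOURS_PER_MONTH


def break_even_utilization(pt_cost: float,
                           on_demand_cost_at_full_capacity: float) -> float:
    """Fraction of reserved capacity you must use for PT to match on-demand."""
    return pt_cost / on_demand_cost_at_full_capacity


# Hypothetical numbers for illustration only
pt = pt_monthly_cost(hourly_rate_per_mu=40.0, model_units=1)
u = break_even_utilization(pt, on_demand_cost_at_full_capacity=35_000.0)
print(f"PT costs ${pt:,.0f}/month; break-even at {u:.0%} utilization")
```

If your measured utilization sits well below the computed fraction, on-demand or batch is the cheaper option for that workload.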

4. Cross-Region Inference

Cross-Region Inference is a routing capability, not a separate billing mode. It lets a request originating in one region automatically use capacity in another region during traffic spikes. Pricing is calculated at the source region’s rate with no cross-region surcharge.

Best for: Production applications that need resilience against regional capacity constraints.

Trade-off: Reduced control over data locality and potential latency variability, depending on which region ultimately serves the request.

AWS Bedrock Token Pricing: Model-by-Model Reference Table

Below are representative On-Demand rates as of early 2026 for popular models in us-east-1. Always verify current pricing on the official AWS Bedrock pricing page before forecasting.

| Model | Input ($/M tokens) | Output ($/M tokens) | Context Window |
|---|---|---|---|
| Claude Opus 4.7 | $5.00 | $25.00 | 200K |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 200K (1M optional) |
| Claude Haiku 4.5 | $1.00 | $5.00 | 200K |
| GPT 5.4 | $2.50 | $15.00 | 1050K |
| Mistral Large 2 | $2.00 | $6.00 | 128K |
| Amazon Nova Pro | $0.80 | $3.20 | 128K |
| Amazon Nova Lite | $0.06 | $0.24 | 300K |
| Amazon Nova Micro | $0.035 | $0.14 | 128K |
| Amazon Titan Text Express | $0.20 | $0.60 | 8K |
| DeepSeek V3.2 | $0.62 | $1.85 | 128K |

Nova Micro at $0.035 per million input tokens is roughly 143 times cheaper than Claude Opus 4.7 at $5.00 per million input tokens. For simple classification, structured extraction, or routing decisions, defaulting to a frontier model is one of the most expensive habits in production AI.

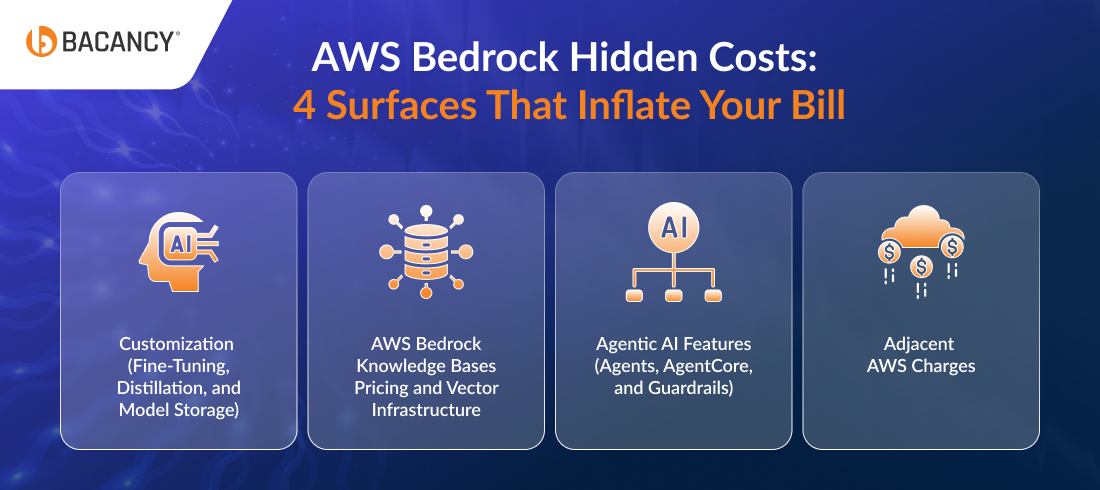

AWS Bedrock Hidden Costs: 4 Surfaces That Inflate Your Bill

Token rates are the visible part of the bill. These four AWS Bedrock hidden costs are where teams get blindsided.

Surface 1: Customization (Fine-Tuning, Distillation, and Model Storage)

Customizing a foundation model on Bedrock has three cost components:

- Training: Charged per token processed during training, calculated as dataset size multiplied by epochs. Rates vary by base model and are listed in the AWS console.

- Storage: Custom model weights are billed monthly at $0.02 to $0.10 per GB.

- Inference: Custom text-generation models cannot run On-Demand. They require Provisioned Throughput, with at least one MU available without commitment, billed hourly.

Reinforcement Fine-Tuning (RFT) is billed at an hourly training rate. Model Distillation charges synthetic data generation at the teacher model’s On-Demand rate, plus customization pricing for the small model.

Teams that planned these charges upfront as part of an AWS migration services engagement typically budget 20-25% more accurately than those who discovered them post-launch.

Surface 2: AWS Bedrock Knowledge Bases Pricing and Vector Infrastructure

Knowledge Bases for Amazon Bedrock have no separate fee, but the underlying components are billed in full.

The cost everyone gets surprised by inside AWS Bedrock Knowledge Bases pricing: Amazon OpenSearch Serverless, the default vector store, has a minimum baseline of 2 OpenSearch Compute Units (OCUs) at $0.24 per OCU per hour, which works out to roughly $345 per month even with zero query traffic.
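The floor is easy to reproduce. The sketch below uses the OCU rate and minimum quoted above; the only assumption is the number of billable hours in a month (720 for a 30-day month, about 730 on average).

```python
OCU_HOURLY_RATE = 0.24  # USD per OCU-hour, as quoted in the text
MIN_OCUS = 2            # minimum baseline, as quoted in the text


def monthly_floor(hours: int = 720) -> float:
    """Minimum monthly vector store charge with zero query traffic."""
    return MIN_OCUS * OCU_HOURLY_RATE * hours


print(f"30-day month:  ${monthly_floor(720):.2f}")
print(f"Average month: ${monthly_floor(730):.2f}")
```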

The 2026 alternative worth knowing: Amazon S3 Vectors, launched in December 2025, is up to 90% cheaper than OpenSearch Serverless and supports trillions of vectors with sub-second latency. For new Knowledge Bases, S3 Vectors should be your default unless you have a specific OpenSearch dependency.

Other retrieval costs: embedding model inference (input tokens only), Bedrock Data Automation for parsing at $0.010 per page, and Amazon Rerank 1.0 at $1.00 per 1,000 reranking queries.

Surface 3: Agentic Features (Agents, AgentCore, and Guardrails)

Bedrock Agents themselves do not charge a per-invocation fee. The trap is token amplification: a single user query can trigger multiple internal model calls (thinking, searching, tool calling, summarizing), and you pay for every intermediate step. Agent workflows commonly consume 4 to 8 times the tokens you would estimate from looking at the user’s prompt and the final response.

Amazon Bedrock AgentCore is now billed across five separate consumption-based layers:

- AgentCore Runtime: Per-second vCPU and GB-hours for active resources

- AgentCore Gateway: Per MCP operation (ListTools, CallTool, Ping) and indexed tools

- AgentCore Memory: Per raw event for short-term memory, per record processed and retrieved for long-term memory

- AgentCore Identity: Per OAuth token or API key issued for non-AWS resources

- AgentCore Policy: Per authorization request, billed at $0.000025 per request

Guardrails charges per text unit (1,000 characters), with content filters and denied topics priced at $0.15 per 1,000 text units each. Bedrock Flows charges $0.035 per 1,000 node transitions, metered daily.
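Guardrails spend scales with text volume and with the number of policies applied, so it is worth estimating separately. This sketch assumes each request is rounded up to whole 1,000-character text units and that every enabled policy is billed on every unit; the function name and the example volumes are ours.

```python
import math


def guardrail_cost(chars_per_request: int, requests: int,
                   policies: int = 2,
                   rate_per_1k_units: float = 0.15) -> float:
    """Estimated monthly Guardrails cost in USD.

    A text unit covers up to 1,000 characters; each policy (for example
    content filters and denied topics) is billed per 1,000 text units.
    """
    units_per_request = math.ceil(chars_per_request / 1000)
    total_units = units_per_request * requests
    return total_units * policies * rate_per_1k_units / 1000


# Example: 1M requests/month, 2,500 characters each, two policies enabled
print(f"${guardrail_cost(2_500, 1_000_000):,.2f} per month")
```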

Surface 4: Adjacent AWS Charges

The line items most teams forget to budget:

- CloudWatch Logging ($0.50 per GB ingested) for prompt and response logging, which compounds quickly with verbose system prompts

- Amazon S3 for storing batch input and output, custom model artifacts, and Knowledge Base documents

- AWS KMS for encrypting data at rest, billed per request and per key

- VPC endpoints for private connectivity, billed hourly per endpoint

- Data transfer between regions and to the public internet, billed at standard EC2 rates (including for AgentCore Runtime, Gateway, Code Interpreter, and Browser as of November 1, 2025)
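Of these, logging is the easiest to estimate ahead of time. Here is a rough sketch for CloudWatch ingestion, assuming you log full prompts and responses; the per-request payload size is a hypothetical input you would measure from your own traffic, and the sketch treats a GB as 1024^3 bytes.

```python
def logging_cost(requests: int, avg_bytes_logged_per_request: int,
                 rate_per_gb: float = 0.50) -> float:
    """Estimated monthly CloudWatch ingestion cost in USD."""
    gb_ingested = requests * avg_bytes_logged_per_request / 1024 ** 3
    return gb_ingested * rate_per_gb


# Example: 5M requests/month, about 20 KB of prompt + response logged each
print(f"${logging_cost(5_000_000, 20 * 1024):,.2f} per month")
```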

On a production bill, these adjacent charges often run 20% to 35% of the inference cost itself. Managing them is not just a matter of tuning your Bedrock setup; it also involves the AWS services running alongside it. For a detailed roadmap on AWS cost optimization, you can refer to our blog.

Need help auditing your AWS Bedrock spend?

Bacancy’s certified AWS engineers run cost audits, architecture reviews, and prompt optimizations for production GenAI workloads. Hire AWS developers with Bedrock experience to cut your bill without compromising quality.

Comparing Foundation Models on Bedrock: Cost, Context, and Trade-offs

Claude on AWS Bedrock pricing matches Anthropic’s direct API pricing exactly across Opus 4.5, Sonnet 4.5, and Haiku 4.5. The advantage of running Claude through Bedrock is its governance: VPC isolation, IAM-based access control, KMS encryption, and a contractual guarantee that prompts and outputs are never used to train models.

For regulated workloads, that posture is often worth more than chasing a $0.20 per million token discount elsewhere.

| Model Provider | Ideal Workload |
|---|---|
| Anthropic | High-stakes reasoning, agentic workflows, code generation |
| Meta | Open-weight workloads, cost-sensitive reasoning |
| Mistral AI | European data residency, multilingual workloads |
| Amazon | High-volume tasks, classification, and embeddings, AWS native pipelines |
| DeepSeek | Cost-sensitive reasoning, coding tasks |
| OpenAI | GPT-native apps on AWS, low-cost open-weight inference |

AWS Bedrock Token Economics by Workload Pattern

The pricing model that wins depends less on the foundation model and more on where your tokens concentrate. Here are the 4 patterns we see most often, and where to look first when costs run hot.

1. Conversational Chatbot

Where spend concentrates: Input tokens. System prompts, persona instructions, safety rules, and conversation history accumulate across every turn. A chatbot handling 10,000 monthly active users on Claude Haiku 4.5 typically runs $200 to $800 per month in inference, with 60-80% of that bill coming from input.

Optimization lever: Prompt caching. The 1-hour cache TTL launched for Claude Sonnet 4.5, Haiku 4.5, and Opus 4.5 in January 2026 makes this dramatically more effective for stateful conversations. Cache reads cost roughly 90% less than standard input tokens.

2. RAG-Based Document Assistant

Where spend concentrates: Retrieval and long input contexts. Each query stuffs retrieved chunks into the prompt, often 5,000 to 20,000 tokens of context per request, and you pay full input rates on every call.

A typical mid-scale RAG assistant handling 50,000 monthly queries on Claude Sonnet 4.5 with a Knowledge Base will run $1,500 to $3,500 per month for inference, plus the OpenSearch Serverless floor.

Optimization levers: Retrieval reranking to send fewer but better chunks, context compression to shorten what gets passed in, and right-sizing the vector store (S3 Vectors over OpenSearch Serverless for new builds).

3. Agentic Workflow

Where spend concentrates: Intermediate model calls. A single user request that calls three tools in sequence will incur model invocations for the initial reasoning, each tool selection, each tool result interpretation, and the final synthesis.

Token consumption is typically 5 to 10 times the tokens visible in the user’s prompt and final answer. An agentic workflow handling 5,000 monthly runs on Claude Sonnet 4.5 with Agents and Guardrails commonly lands at $2,500 to $6,000 per month.

Optimization levers: Model cascade (route simple sub-tasks to cheaper models), loop budget caps on agent iteration depth, and structured output schemas to reduce verbose intermediate reasoning.

4. Batch Processing Pipeline

Where spend concentrates: Customization training, model storage, and the volume of input tokens at processing time. A nightly summarization pipeline on Llama 3.3 70B for 100,000 documents runs $200 to $600 per month at Batch rates, but a fine-tuned variant adds training and monthly storage costs on top.

Optimization levers: Distillation (smaller model trained from a frontier teacher), Batch mode (50% discount), and scheduled jobs aligned to business hours.

Building Your Own AWS Bedrock Cost Calculator

The fastest way to size a workload is a back-of-the-envelope AWS Bedrock cost calculator built from four inputs:

- monthly request volume

- average input tokens per request (including retrieved context and conversation history)

- average output tokens per request, and

- model choice

Multiply request volume by input tokens, divide by 1 million, and multiply by the model’s input rate. Repeat for output tokens. Sum the two.

For agentic workloads, multiply the result by 5 to 8 to account for token amplification. For RAG, add the OpenSearch Serverless or S3 Vectors floor. For Knowledge Bases at scale, layer in embedding model inference and reranking.

AWS Pricing Calculator handles the underlying services well, but does not yet model agent token amplification, so build that multiplier into your spreadsheet.
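The spreadsheet logic above can be sketched in a few lines of Python. The `Workload` fields mirror the four inputs plus the two adjustments described in this section; the class and field names are our own, and the example numbers (50,000 queries, 10,000 input and 500 output tokens per request, $3.00/$15.00 rates, a $345.60 vector store floor) are illustrative rather than a quote.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    monthly_requests: int
    avg_input_tokens: int             # include retrieved context and history
    avg_output_tokens: int
    input_rate: float                 # USD per million input tokens
    output_rate: float                # USD per million output tokens
    agent_multiplier: float = 1.0     # e.g. 5 to 8 for agentic workloads
    vector_store_floor: float = 0.0   # fixed monthly vector store cost


def monthly_cost(w: Workload) -> float:
    """Back-of-the-envelope monthly cost in USD."""
    input_cost = w.monthly_requests * w.avg_input_tokens / 1_000_000 * w.input_rate
    output_cost = w.monthly_requests * w.avg_output_tokens / 1_000_000 * w.output_rate
    return (input_cost + output_cost) * w.agent_multiplier + w.vector_store_floor


rag = Workload(50_000, 10_000, 500, 3.00, 15.00, vector_store_floor=345.60)
print(f"RAG assistant: ${monthly_cost(rag):,.2f} per month")
```

Embedding inference, reranking, and adjacent AWS charges are deliberately left out here; add them as extra fixed or per-request terms once the core estimate looks reasonable.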

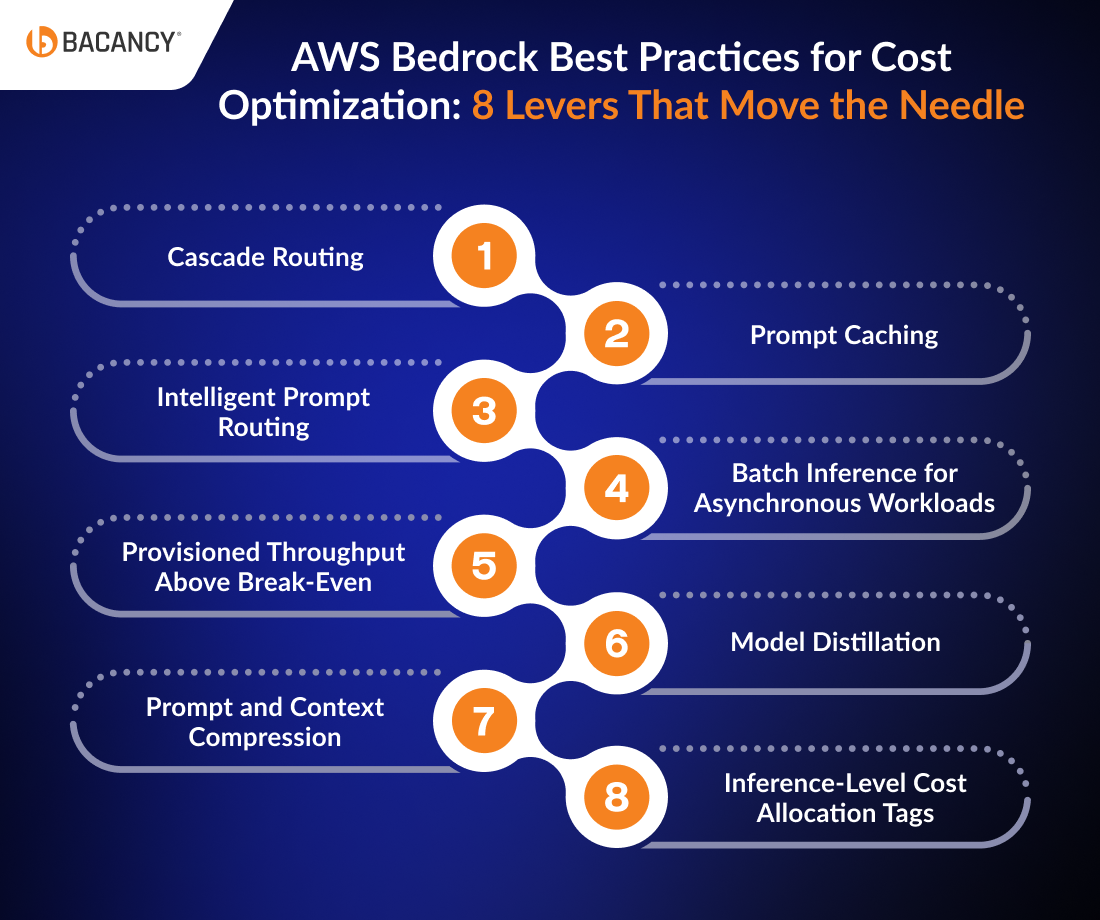

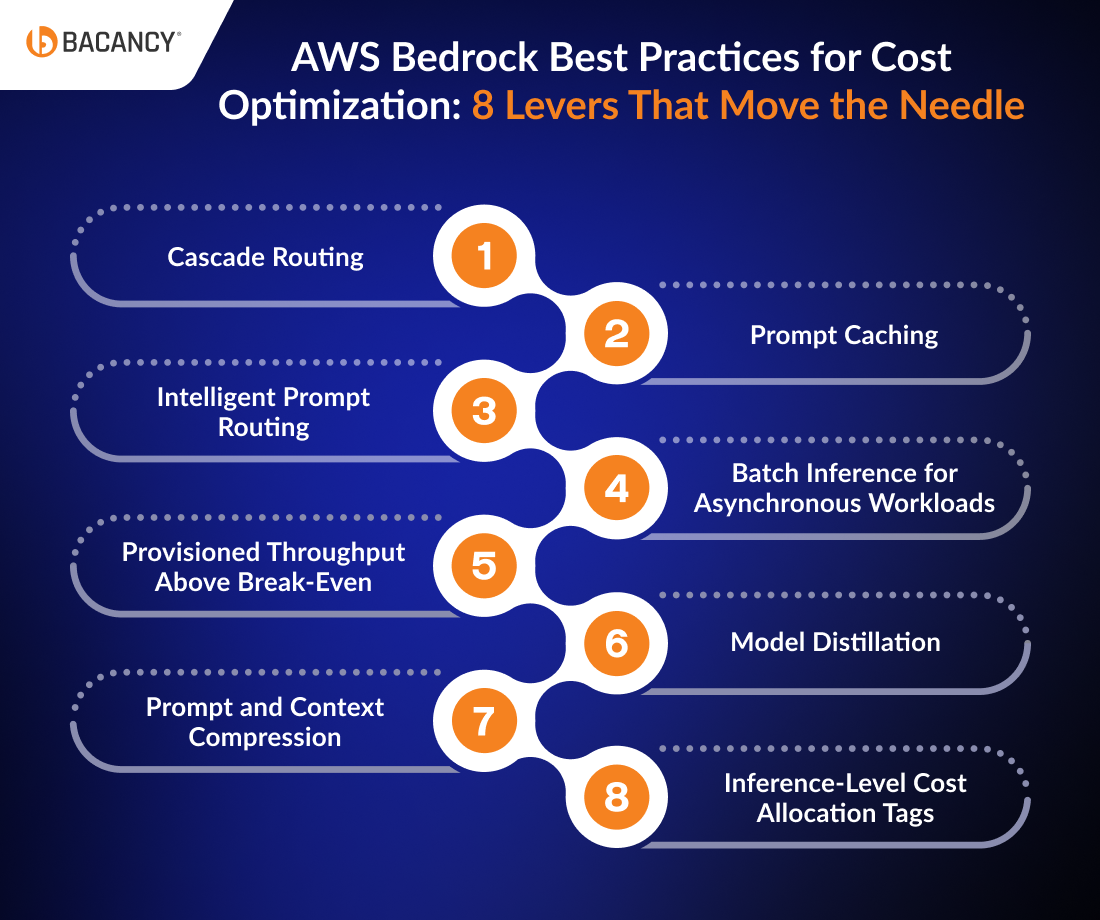

AWS Bedrock Best Practices for Cost Optimization: 8 Levers That Move the Needle

These cross-cutting AWS Bedrock best practices for cost optimization compound across workload patterns. Most teams already know one or two; the leverage comes from stacking them.

1. Cascade Routing

Route simple requests to a cheap model first. Escalate to a frontier model only when the cheaper response fails a confidence check. A typical implementation cuts blended cost-per-request by 40-60% without measurable quality loss on tasks like classification, simple Q&A, or routing decisions.
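Here is a minimal sketch of the cascade pattern. It assumes each model call returns an answer together with a confidence score between 0 and 1; how you derive that score (log probabilities, a validator, a schema check) is up to you. The two stub functions stand in for real model invocations and are purely hypothetical.

```python
from typing import Callable, Tuple

# A model call returns (answer, confidence in [0, 1])
ModelFn = Callable[[str], Tuple[str, float]]


def cascade(prompt: str, cheap: ModelFn, frontier: ModelFn,
            threshold: float = 0.8) -> Tuple[str, str]:
    """Try the cheap model first; escalate only if confidence is too low."""
    answer, confidence = cheap(prompt)
    if confidence >= threshold:
        return answer, "cheap"
    answer, _ = frontier(prompt)
    return answer, "frontier"


# Stubs standing in for real model invocations
def cheap_stub(prompt: str) -> Tuple[str, float]:
    return ("billing", 0.95) if "invoice" in prompt else ("unknown", 0.30)


def frontier_stub(prompt: str) -> Tuple[str, float]:
    return ("technical-support", 0.99)


print(cascade("Where is my invoice?", cheap_stub, frontier_stub))
print(cascade("My API returns HTTP 500", cheap_stub, frontier_stub))
```

Logging which path served each request lets you measure the escalation rate, which is the number that determines your blended cost.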

2. Prompt Caching

For repeated context (system prompts, RAG passages, few-shot examples), cached reads cost about 90% less than uncached input tokens. The first cache write costs roughly 25% above standard input rates, so at those rates the write premium is recovered by the first cache read within the TTL window. Track CacheReadInputTokens and CacheWriteInputTokens in CloudWatch to validate savings.
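The break-even point depends on the write premium, so it is worth checking for your own rates. This sketch measures cost in units of "one uncached pass over the shared prefix" and finds the smallest number of cache reads at which caching becomes cheaper; the 100% premium in the second call is a hypothetical higher-premium case for comparison.

```python
from typing import Optional


def reads_to_break_even(write_premium: float, read_discount: float = 0.90,
                        max_reads: int = 1000) -> Optional[int]:
    """Smallest number of cache reads at which caching beats not caching.

    Costs are relative to one uncached pass over the shared prefix:
    the first call pays (1 + write_premium), each later read pays
    (1 - read_discount), and without caching every call pays 1.
    """
    for reads in range(1, max_reads + 1):
        cached = (1 + write_premium) + reads * (1 - read_discount)
        uncached = 1 + reads
        if cached < uncached:
            return reads
    return None


print(reads_to_break_even(write_premium=0.25))  # 25% write premium
print(reads_to_break_even(write_premium=1.00))  # hypothetical 100% premium
```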

3. Intelligent Prompt Routing

Bedrock’s native Intelligent Prompt Routing automatically routes between models in the same family (for instance, Claude 3) based on prompt complexity. AWS reports cost reductions of up to 30% without quality loss, with no application changes required.

4. Batch Inference for Asynchronous Workloads

Anything not requiring a real-time response should default to Batch: nightly summarization, document enrichment, evaluation runs, embedding backfills, and content moderation queues. The discount is a clean 50% off on On-Demand.

5. Provisioned Throughput Above Break-Even

Move a workload to PT only when sustained utilization on a single model exceeds 80%. Below that, you are paying for idle capacity. Use the 1-month commitment first to validate the pattern before locking into a 6-month term, and negotiate directly with your AWS account team for enterprise discounts.

6. Model Distillation

Train a smaller model on outputs from a frontier teacher. It often delivers 90%+ of the quality at a fraction of the inference cost. Distillation is most effective for narrow, repetitive tasks like extraction, classification, and structured generation, and least effective for open-ended reasoning.

7. Prompt and Context Compression

Prompt compression trims unnecessary words from system instructions and few-shot examples (verbose prompts often carry 30-40% of input that can be cut without changing behavior). Context compression summarizes retrieved chunks before they enter the prompt, which is useful for RAG pipelines where 60%+ of input tokens come from retrieval.

8. Cost Allocation Tagging and Visibility

You cannot optimize what you cannot see. Tag every Bedrock invocation by team, product, environment, and feature. AWS Cost Explorer with the Amazon Bedrock service filter, plus per-model CloudWatch metrics, gives you the granular view needed to identify which workloads are driving spend and which are quietly burning budget on the wrong model.

How AWS Bedrock Compares to OpenAI Direct, Azure OpenAI, and Vertex AI

| Dimension | AWS Bedrock | OpenAI Direct | Azure OpenAI | Google Vertex AI |
|---|---|---|---|---|
| Per-token cost (Frontier) | Matches Anthropic direct pricing | Lowest for GPT models | Slight Microsoft premium | Competitive on Gemini |
| Model breadth | 60+ models and 15+ providers | OpenAI only | OpenAI only | Google + selected partners |
| Governance | AWS, VPC, IAM, KMS native | Vendor-managed | Microsoft trust boundary | Google trust boundary |
| Training-data guarantee | No training on customer data | No training on customer data (API tier) | No training on customer data | No training on customer data |
| Enterprise contracting | Single AWS contract | Direct OpenAI contract | Microsoft EA integration | Google Cloud contract |
| Ideal buyer | AWS-native enterprises | OpenAI-first AI teams | Microsoft-stack enterprises | Google Cloud customers and multimodal-heavy workloads |

For a detailed comparison between the two most common starting points, see our blog on Amazon Bedrock vs ChatGPT.

For teams evaluating cloud providers for generative AI workloads, the comparison goes beyond per-token rates. Governance posture, model breadth, data residency, and ecosystem fit usually matter more than $0.50 per million tokens.

When to choose which:

- Choose AWS Bedrock when your data, compliance posture, and existing infrastructure live in AWS, and when model optionality matters more than picking a single vendor.

- Choose OpenAI Direct for teams that have standardized on GPT and want the latest models the day they ship.

- Choose Azure OpenAI for Microsoft-native enterprises where Office 365, Teams, and Active Directory integration are central.

- Choose Google Vertex AI for Google Cloud customers and for multimodal workloads where Gemini’s native video, audio, and document handling are differentiators.

How Bacancy Helps Teams Manage AWS Bedrock Pricing and Costs

We begin by understanding how your current AI workloads consume resources. It helps uncover where costs are increasing, whether due to high token usage, over-reliance on premium models, or inefficient API calls. Based on this, the team aligns your architecture with a more cost-efficient strategy.

Here’s how Bacancy actively helps reduce and control costs:

- Usage Analysis & Cost Mapping: Identify high-cost models, regions, and workflows that impact your budget

- Smart Model Selection: Match each use case with the most cost-effective model and pricing option

- Token & Prompt Optimization: Refine prompts and responses to reduce unnecessary token consumption

- Workflow Efficiency: Eliminate redundant API calls and introduce caching to avoid repeated processing

- Cost Controls & Alerts: Set budgets, usage limits, and real-time alerts to prevent overspending

What sets this apart is that it doesn’t stop at a one-time audit. As your AI usage grows and pricing evolves, we keep refining the setup, so your costs stay predictable even as you scale.

Conclusion

AWS Bedrock pricing is a layered cost model where the per-token rate is only the most visible line item on a production bill. Knowledge Base infrastructure, agent token amplification, customization storage, and adjacent AWS charges routinely add 30% to 80% on top of the inference cost you projected.

The teams that get this right are the ones who plan for the total cost of ownership before they architect.

Get in touch with our AWS consulting team for help with the design, deployment, and optimization of generative AI apps on Amazon Bedrock. Our certified AWS engineers handle model selection, prompt and context optimization, FinOps tagging, and Bedrock architecture reviews so you can scale GenAI without scaling your AWS invoice.

Frequently Asked Questions (FAQs)

Is AWS Bedrock cheaper than calling model providers directly?

Both have different feature sets. For Anthropic models like Claude, AWS Bedrock pricing matches direct Anthropic API pricing exactly. For Meta Llama and other open-weight models, Bedrock can be 10-40% more expensive than alternatives like Groq.

Does Amazon Bedrock have a free tier?

No, Amazon Bedrock has no permanent free tier, and you pay from the first API call. New AWS accounts receive $200 in starter credits ($100 on signup, $100 for guided activities) usable across 200+ AWS services, including Bedrock. Credits expire after six months.

What is the cheapest model on AWS Bedrock?

Amazon Nova Micro at $0.035 per million input tokens and $0.14 per million output tokens is among the cheapest text models. Google Gemma 3 4B and Mistral Voxtral Mini are also priced near the floor. For simple classification, routing, or structured extraction, these models deliver acceptable quality at a fraction of frontier-model cost.

How much does fine-tuning cost on AWS Bedrock?

Fine-tuning has three cost components: training (per token processed, calculated as dataset size multiplied by epochs), monthly model storage at $0.02 to $0.10 per GB, and inference. Custom text-generation models cannot run On-Demand and require Provisioned Throughput, billed hourly with optional 1-month or 6-month commitments.

Are Bedrock Knowledge Bases free to use?

No, Knowledge Bases for Amazon Bedrock have no separate fee, but the underlying vector store does. The default OpenSearch Serverless backend has a minimum AWS Bedrock Knowledge Bases pricing floor of roughly $345 per month (2 OCUs at $0.24 per OCU per hour), even with zero query traffic.

Is there an official AWS Bedrock cost calculator?

AWS provides a generic Pricing Calculator that supports Bedrock inference, but it does not currently model agent token amplification, prompt caching, or Knowledge Base retrieval costs end-to-end. A reliable AWS Bedrock cost calculator is best built as a spreadsheet that combines monthly request volume, average input and output tokens, model rates, agent multipliers, and vector store floors.