Top 27 Python Libraries for Data Science in 2026 – Chosen by Data Experts Worldwide

Last Updated on February 9, 2026

Quick Summary

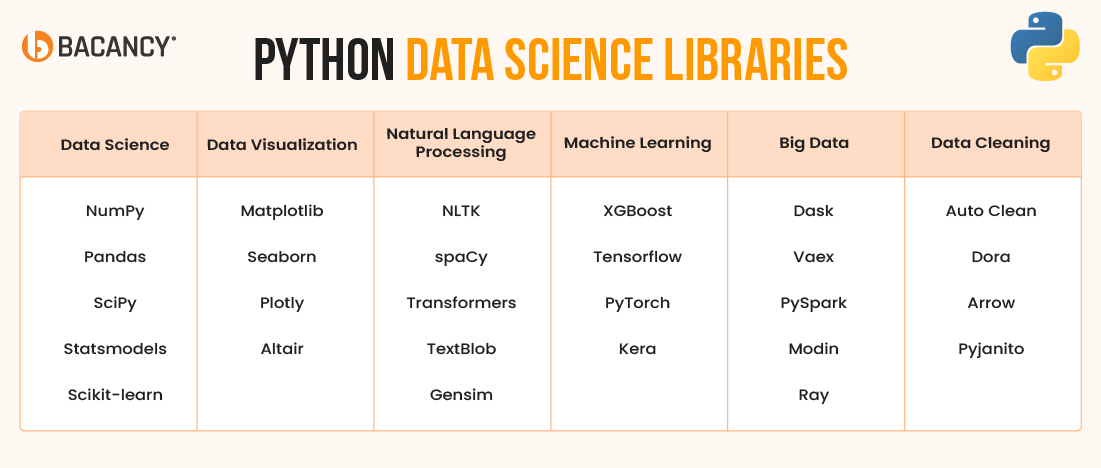

The best Python libraries for data science can help your data teams move faster, cut errors, and build smarter models. This blog brings together 27 important Python libraries for data science that every data leader should know. If you’re leading data science teams or building analytics platforms, this guide shows you what to use, when to use it, and how to make the most of Python.

What do high-performing data science teams have in common?

A streamlined Python stack that connects the right tools to the right outcomes. Python stands as the most used language in data science and machine learning, backed by a vast ecosystem of libraries that support every part of the data pipeline.

Python has a specialized library for almost every task, from data preparation to model deployment. With over 137,000 libraries available, the challenge lies in selecting the ones that deliver real value.

Not all libraries offer the same reliability or efficiency. In this guide, you will find 27 essential Python libraries for data science in 2026, organized by use case. This list will help your team cut through the noise and focus on tools that drive results.

These core libraries give you the essential tools for handling data, performing analysis, and building a solid data science workflow from the ground up.

NumPy (Numerical Python) is the core library for high-performance numerical and scientific computing in Python. At its core is the ndarray, a fixed-type, multi-dimensional array that allows fast, memory-efficient operations for vectors, matrices, and tensors.

This Python data science library is written in C for optimal speed and outperforms native Python structures, especially in large-scale calculations. It supports various numerical functions, including linear algebra, Fourier transforms, and random number generation.

NumPy is essential for simulations, numerical analysis, and preprocessing in machine learning workflows. As the computational backbone of libraries like Pandas, SciPy, and Scikit-learn, Python NumPy is widely used across industries from engineering and physics to AI and quantitative finance.
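To make the ndarray idea concrete, here is a minimal sketch of the operations mentioned above: vectorized arithmetic, linear algebra, a Fourier transform, and random number generation. The array values and variable names are illustrative assumptions, not taken from any particular project.

```python
import numpy as np

# A 2-D ndarray with a single fixed dtype (float64 here).
matrix = np.array([[2.0, 1.0], [1.0, 3.0]])
rhs = np.array([3.0, 5.0])

# Vectorized arithmetic runs in compiled code rather than a Python loop.
scaled = matrix * 10

# Linear algebra: solve the system matrix @ x = rhs.
solution = np.linalg.solve(matrix, rhs)

# Fourier transform of a short, made-up signal.
spectrum = np.fft.fft(np.array([1.0, 0.0, -1.0, 0.0]))

# Reproducible random numbers through the Generator API.
rng = np.random.default_rng(seed=0)
samples = rng.normal(size=1000)
```

The same pattern scales to much larger arrays, which is where the speed gap over plain Python lists becomes noticeable.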

Pandas provides a robust, high-level abstraction for structured data through its DataFrame and Series objects. It is designed for fast, flexible, and intuitive data analysis and manipulation, whether you’re cleaning raw CSVs, joining tables, handling time series, or building complex feature pipelines.

Built on top of NumPy, Pandas is ideal for Exploratory Data Analysis (EDA), business analytics, and preprocessing before machine learning. Its seamless integration with Excel, SQL, and formats like JSON or Parquet makes it a go-to tool for engineers and analysts.

Pandas is the de facto standard for working with tabular data and a mainstay among Python libraries for data science.
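As a rough illustration of the cleaning, joining, and aggregating workflow described above, the sketch below uses two small made-up tables. The column names and values are assumptions chosen only for the example.

```python
import pandas as pd

# Hypothetical order and customer tables.
orders = pd.DataFrame({
    "order_id": [1, 2, 3, 4],
    "customer_id": [10, 11, 10, 12],
    "amount": [25.0, None, 40.0, 15.0],
})
customers = pd.DataFrame({
    "customer_id": [10, 11, 12],
    "region": ["EU", "US", "EU"],
})

# Clean: fill the missing amount with the column median.
orders["amount"] = orders["amount"].fillna(orders["amount"].median())

# Join the two tables, then aggregate revenue per region.
merged = orders.merge(customers, on="customer_id", how="left")
revenue_by_region = merged.groupby("region")["amount"].sum()
```

Median imputation is just one possible choice here; the right strategy depends on why the values are missing.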

SciPy is an advanced Python library for scientific computing built on top of NumPy. It adds tools for math-heavy tasks like optimization, statistics, integration, and signal processing. You can solve equations, analyze data, and run simulations using its ready-to-use modules like scipy.optimize or scipy.stats.

SciPy is used in fields such as physics, finance, and engineering. It is one of the most important Python libraries for data science, where precision and complex math are required.
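The sketch below touches three of the areas mentioned above: optimization, integration, and statistics. The functions being minimized and integrated, and the two sample groups, are invented for illustration.

```python
from scipy import integrate, optimize, stats

# Optimization: minimize a simple quadratic whose minimum is at x = 3.
result = optimize.minimize_scalar(lambda x: (x - 3.0) ** 2 + 1.0)

# Integration: integrate x^2 from 0 to 1 (the exact answer is 1/3).
area, abs_error = integrate.quad(lambda x: x ** 2, 0.0, 1.0)

# Statistics: two-sample t-test on two small made-up groups.
group_a = [5.1, 4.9, 5.3, 5.0, 5.2]
group_b = [5.8, 6.1, 5.9, 6.0, 6.2]
t_stat, p_value = stats.ttest_ind(group_a, group_b)
```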

Statsmodels is a Python library for statistical analysis that helps you test hypotheses, estimate parameters, and explain results clearly. It supports linear regression, Generalized Linear Models (GLMs), survival analysis, and time series models such as Autoregressive Integrated Moving Average (ARIMA) and Seasonal Autoregressive Integrated Moving Average (SARIMA).

You get detailed outputs, such as p-values and confidence intervals, which are helpful in research, economics, and any field that needs clear, explainable results. As one of the more beginner-friendly Python libraries for data science, Statsmodels is ideal when transparency and interpretability matter most.

Scikit-learn is a Python library for building machine learning models and analyzing data. It is among the top Python libraries for data science because it supports classification, regression, clustering, and dimensionality reduction through a clean, consistent API.

It also offers helpful tools like cross-validation, pipelines, feature selection, and hyperparameter tuning. Scikit-learn works best on structured (tabular) data and is often the first choice for building fast, explainable, and reliable ML models.
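A short sketch of how pipelines, cross-validation, and hyperparameter tuning fit together is shown below. It uses the built-in iris dataset and a logistic regression; the step names and the grid of `C` values are arbitrary choices for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# A pipeline keeps preprocessing and the model together, so scaling
# is re-fitted inside every cross-validation fold.
pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("clf", LogisticRegression(max_iter=1000)),
])

# 5-fold cross-validation accuracy.
scores = cross_val_score(pipeline, X, y, cv=5)

# Hyperparameter tuning: search over the regularization strength C.
search = GridSearchCV(pipeline, {"clf__C": [0.1, 1.0, 10.0]}, cv=5)
search.fit(X, y)
```

After fitting, `search.best_params_` and `search.best_score_` report the winning setting and its cross-validated accuracy.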

These Python data visualization libraries turn complex information into clear, interactive visuals that reveal patterns, trends, and insights. They also make your data and apps easier to interpret and act on.

Matplotlib is a battle-tested Python library for data visualization. Its stateful interface mimics MATLAB, while the object‑oriented API maximizes fine‑grained control of every axis, tick, and annotation. With support for line, bar, scatter, error, and polar plots, Matplotlib powers dashboards, academic papers, and production reporting alike.
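To show what fine-grained control looks like in practice, the sketch below uses the object-oriented API to set custom ticks, add error bars, and place an annotation. It assumes a headless environment, so it selects the `Agg` backend and writes the PNG to an in-memory buffer instead of a window.

```python
import io

import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the script runs without a display
import matplotlib.pyplot as plt
import numpy as np

x = np.linspace(0, 2 * np.pi, 100)

# The object-oriented API: work with explicit Figure and Axes objects.
fig, ax = plt.subplots(figsize=(6, 3))
ax.plot(x, np.sin(x), label="sin(x)")
ax.errorbar(x[::10], np.cos(x[::10]), yerr=0.1, fmt="o", label="cos(x) with error bars")

# Fine-grained control over ticks, labels, and annotations.
ax.set_xticks([0, np.pi, 2 * np.pi])
ax.set_xticklabels(["0", "pi", "2 pi"])
ax.set_xlabel("x (radians)")
ax.annotate("peak", xy=(np.pi / 2, 1.0), xytext=(2.5, 0.8),
            arrowprops={"arrowstyle": "->"})
ax.legend()

# Render to an in-memory PNG instead of opening a window.
buffer = io.BytesIO()
fig.savefig(buffer, format="png")
png_bytes = buffer.getvalue()
```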

Seaborn is a Python library that makes it easy to create attractive statistical charts like heatmaps and pair plots. It works well with Pandas and is great for quick data exploration, often in a single line of code. You can still customize charts using Matplotlib if needed.
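As a small example of the Pandas integration, the sketch below draws a correlation heatmap from a DataFrame in one call and then tweaks it through the Matplotlib Axes it returns. The data is random and the column names are placeholders.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend; drop this line in a notebook
import numpy as np
import pandas as pd
import seaborn as sns

# Random placeholder data with three numeric columns.
rng = np.random.default_rng(seed=0)
df = pd.DataFrame(rng.normal(size=(100, 3)), columns=["a", "b", "c"])

# One call produces an annotated correlation heatmap.
ax = sns.heatmap(df.corr(), annot=True, vmin=-1, vmax=1, cmap="coolwarm")

# The return value is a regular Matplotlib Axes, so it can be customized further.
ax.set_title("Correlation matrix")
```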

Plotly lets you create interactive charts in Python, including 3D graphs and real-time visuals. It has features like hover info, zoom, and built-in responsive layouts. You can export charts to HTML or use them in web apps and dashboards.

Altair is a Python library for creating clear, interactive charts using simple, high-level code. It is built on the Vega‑Lite grammar, so you can describe complex visuals in a few declarative lines. Charts can also be exported as JSON or HTML for use in web apps.

Python offers powerful, result-driven libraries to handle language data at scale. These Python libraries for NLP help teams analyze, extract, and understand text with speed, accuracy, and reliability.

The Natural Language Toolkit (NLTK) is a Python library for basic NLP tasks like tokenizing, stemming, and parsing. Thanks to its simple design and helpful tutorials, it’s great for learning and testing language rules. While NLTK is not the fastest, it’s perfect for teaching and small projects.

spaCy is a fast and efficient NLP library for tasks like segmenting text, entity recognition, and parsing. It supports 60+ languages and works well with PyTorch or TensorFlow. With a clean API and built-in tools, it’s great for production-level NLP projects.

The Transformers library by Hugging Face gives access to pre-trained models like BERT and GPT for text, images, and speech. You can load and fine-tune powerful models in just a few lines. It handles complex steps, such as input preparation and hardware setup, to speed up deployment.

TextBlob is a beginner-friendly tool for basic text tasks like sentiment analysis, part-of-speech tagging, and noun phrase extraction. It’s easy to use and works well for quick demos or simple dashboards. You can get results with just a few lines of code.

Gensim is a Python library that finds patterns and topics in large text data using models like Latent Dirichlet Allocation (LDA), doc2vec, fastText, and word2vec. It processes data in streams, so you don’t need to load everything into memory. You can also update models over time, which is useful for news or social media analysis.

The following user-tested Python Machine Learning libraries empower real-world AI systems and support accurate, scalable, and production-ready solutions that teams trust.

XGBoost is a powerful library for getting high accuracy on structured data using gradient boosting. It handles large datasets efficiently with features like GPU support and out-of-core training. Built-in tools like cross-validation and early stopping help you speed up model tuning.

TensorFlow is a complete open-source platform with tools like Keras, TensorBoard, TensorFlow Privacy, and TFX for building and managing models. It can compile models into computation graphs, which makes it easier to deploy to anything from a Graphics Processing Unit (GPU) to mobile devices.

This Machine Learning Python library includes features like auto-differentiation and SavedModel, which make it simple to scale across cloud or edge environments.

PyTorch uses eager execution, which allows you to execute and debug model components step by step using standard Python debugging tools. It has built-in tools for calculating gradients and building model layers in a flexible, block-like way.

With add-ons like PyTorch Lightning and TorchServe, it’s easy to move from research to real-world deployment.
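To illustrate eager execution and the built-in gradient tools, the sketch below computes a gradient by hand-checkable arithmetic and then stacks layers into a small network. The tensor values and layer sizes are arbitrary choices for the example.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Autograd: every operation runs immediately, so values can be inspected
# with print() or a debugger at any step.
w = torch.tensor(1.0, requires_grad=True)
x, y = torch.tensor(2.0), torch.tensor(6.0)
loss = (w * x - y) ** 2
loss.backward()
# d(loss)/dw = 2 * (w*x - y) * x = 2 * (2 - 6) * 2 = -16
gradient = w.grad.item()

# Layers compose like building blocks.
model = nn.Sequential(
    nn.Linear(4, 8),
    nn.ReLU(),
    nn.Linear(8, 1),
)
batch = torch.randn(16, 4)
output = model(batch)
```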

Keras makes deep learning easier by turning complex models into simple, readable code. Its functional API and built-in tools, such as early stopping and learning rate control, allow you to build advanced networks quickly. Since TensorFlow 2, Keras has been fully integrated and ready for both beginners and production use.

As data volumes grow, traditional tools fall short. These Python libraries for big data are built to process large datasets across clusters with speed and efficiency.

Dask breaks large NumPy and Pandas tasks into smaller parts that run in parallel on a laptop, cluster, or Kubernetes cloud. Its DataFrame and Array tools follow the same style as Pandas and NumPy, which makes it easy to use. The smart scheduler moves tasks around to keep things running quickly and smoothly.
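The sketch below shows the basic idea with a Dask array: the data is split into chunks, operations build a lazy task graph, and nothing runs until `compute()` is called. The array shape and chunk size are arbitrary, and the DataFrame interface follows the same lazy pattern.

```python
import dask.array as da

# A 10,000 x 1,000 array stored as ten 1,000 x 1,000 chunks.
x = da.ones((10_000, 1_000), chunks=(1_000, 1_000))

# NumPy-style expression; this only builds a task graph.
lazy_total = (x * 2).sum()

# compute() executes the graph, processing chunks in parallel.
total = lazy_total.compute()
```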

Vaex loads data lazily from memory‑mapped files such as Arrow, which lets a single machine compute statistics at up to about a billion rows per second. Its expression system optimizes on‑the‑fly aggregations without materializing copies, while interactive histograms remain snappy. Its DataFrame API is also similar to Pandas.

PySpark connects Python with Apache Spark’s powerful engine, so you can use Python code to work with big data. It supports tools like Spark SQL, DataFrames, and MLlib for machine learning. PySpark is great for handling huge amounts of data, running complex graphs, and processing live data streams across multiple machines.

It is one of the most robust Python libraries for big data. Spark's query optimizer improves performance automatically, and its fault tolerance keeps jobs running even if some machines fail.

Modin speeds up your existing Pandas code by using all available CPU cores or a Ray cluster, typically with nothing more than a changed import line. It distributes the workload efficiently, which allows your system to handle larger datasets. You still get the familiar Pandas experience but with significantly better performance on many workloads.

Ray is a Python library that lets you run tasks in parallel across multiple machines using a simple API. It includes tools for tuning models, serving them, and performing reinforcement learning. Ray also speeds things up by efficiently sharing data between tasks.

Clean data is a must before you model or visualize anything. These libraries help detect errors, fill gaps, and structure your data for reliable insights.

AutoClean is a Python library that automatically detects and handles missing values, outliers, and categorical variables in pandas DataFrames. It applies imputations and encodings following ML best practices, speeding up dataset preparation for projects like Kaggle competitions.

Dora automates exploratory data analysis, generating visual summaries, statistical tests, and feature engineering ideas. Its report ranks variable importance and flags multicollinearity, aiding rapid hypothesis building.

Apache Arrow defines a columnar, in‑memory format that backs the optional Arrow data types in pandas 2.x, interoperates closely with Parquet, and is used by numerous ML accelerators. Its Python bindings, PyArrow, allow zero‑copy reads and vectorized operations on data shared with implementations in C++, Rust, and Java.

Pyjanitor extends pandas with verbs like clean_names(), remove_columns(), and remove_empty() to create readable, pipe‑friendly cleaning pipelines. Inspired by the R janitor package, it encourages reproducible, declarative data‑prep scripts.

Whether you create machine learning models, run statistical analyses, or visualize insights, selecting the right Python libraries can shape the success of your data science project. Here’s a structured way to choose the ideal ones:

Define the goal of your project. To narrow down the right tools, focus on tasks like data analysis, machine learning, NLP, visualization, or deep learning.

Select libraries with active communities and consistent updates. Use Pandas for data manipulation, Scikit-learn for ML, and Matplotlib for basic charts.

Choose libraries with clear documentation and easy-to-follow examples. Python libraries like Pandas, Seaborn, and Scikit-learn support faster adoption and smoother implementation.

Confirm that the library works with essential tools like Jupyter, SQLAlchemy, TensorFlow, or PySpark. You need to ensure it supports your Python version and tech stack.

Pick tools that handle large datasets efficiently. Dask and RAPIDS improve performance, while Prophet and Statsmodels suit time series projects.

Use Scikit-learn, XGBoost, or LightGBM for machine learning and choose TensorFlow, PyTorch, or Hugging Face for implementing deep learning. You can also opt for spaCy or NLTK for NLP.

Check for open-source licenses like MIT or Apache 2.0 to allow enterprise use. Avoid tools with restrictive or unclear legal terms.

Test the library with small datasets. Before full adoption, assess performance, ease of use, and API structure.
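Part of the checklist above can be automated. The sketch below uses only the standard library to read the version, declared license, and supported Python range of packages installed in the current environment. The helper name `describe_package` and the `CANDIDATES` list are hypothetical, and the license field is whatever the package author declared, so treat it as a starting point rather than legal confirmation.

```python
import sys
from importlib import metadata

# Hypothetical shortlist of packages to inspect.
CANDIDATES = ["numpy", "pandas", "scikit-learn"]


def describe_package(name):
    """Return basic metadata for an installed package, or None if it is not installed."""
    try:
        meta = metadata.metadata(name)
    except metadata.PackageNotFoundError:
        return None
    return {
        "name": meta.get("Name"),
        "version": meta.get("Version"),
        # Newer packages may declare License-Expression instead of License.
        "license": meta.get("License") or meta.get("License-Expression"),
        "requires_python": meta.get("Requires-Python"),
    }


report = {name: describe_package(name) for name in CANDIDATES}
current_python = f"{sys.version_info.major}.{sys.version_info.minor}"
```

A `None` entry in `report` simply means the package is not installed in this environment, which is itself a useful signal before a trial run.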

Python continues to lead the way in data science because of its powerful, easy-to-use libraries. Whether you want to clean data with Pandas, build models with Scikit-learn, or work with deep learning tools like TensorFlow or PyTorch, these libraries help solve real-world problems across industries.

However, knowing which tools to pick is only the first step. The real impact comes when the right team applies them to the right problem. That’s where Bacancy comes in.

Our team builds data-driven products that deliver real value. We have worked with fast-growing startups and global enterprises to create scalable, smart, and future-ready solutions. With a strong grip on the Python ecosystem, our Python professionals ensure that every library serves a clear purpose and fits your business goals.

Ready to move from potential to performance? Get in touch with Bacancy and work with a team that understands your goals, speaks your language, and delivers beyond expectations.

You can use Dask, Vaex, or PySpark when your data is too large for Pandas. These libraries handle big data by running tasks in parallel and across multiple machines.

Yes, most libraries in the Python ecosystem are designed to work together. For example, you can prepare data with Pandas, visualize it using Seaborn, and model it with Scikit-learn or XGBoost.

In 2026, top libraries include Pandas, NumPy, Scikit-learn, TensorFlow, PyTorch, Plotly, spaCy, and MLflow. These tools support a wide range of data science needs, from EDA to advanced machine learning and deployment.

Follow GitHub trends, join communities on Reddit, Stack Overflow, or Slack, and subscribe to newsletters like Python Weekly or Towards Data Science. These keep you updated with the latest tools and updates.
