12 Prompt Engineering Best Practices To Master For AI Excellence

Last Updated on January 9, 2026

Quick Summary

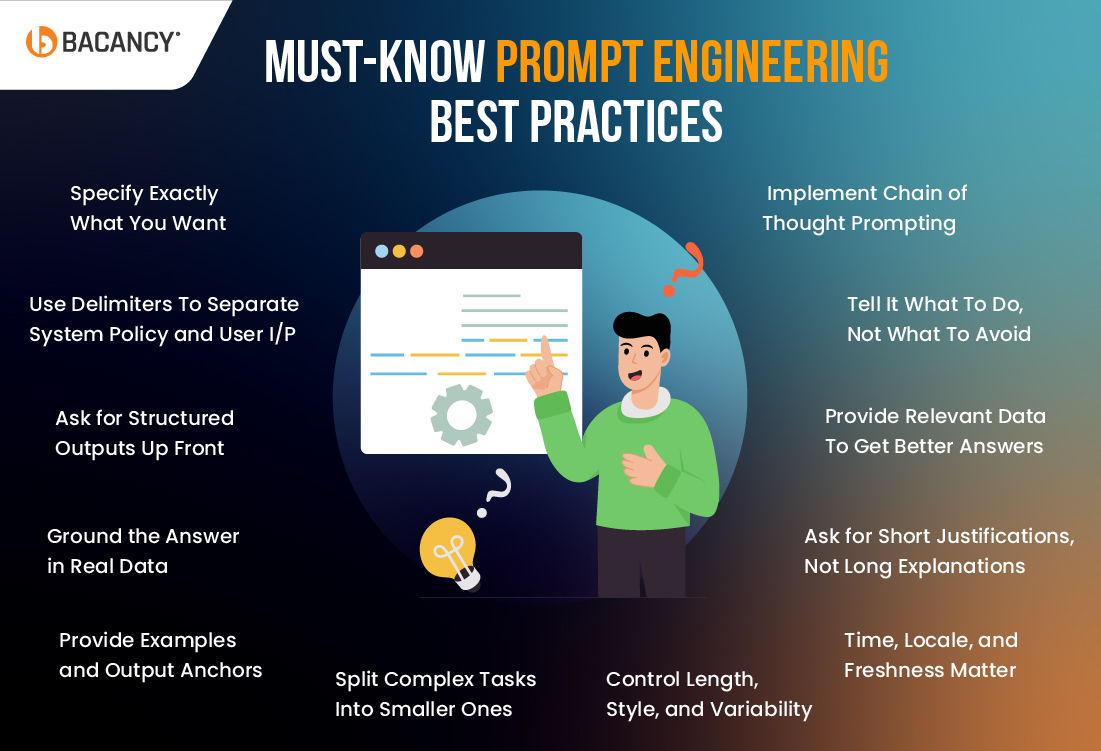

This blog post covers the essentials of prompt engineering for organizations that want to optimize their AI models and enhance their performance. We discuss the significant impact of prompt engineering on AI model performance and explore 12 prompt engineering best practices you should not miss if your goal is to optimize your prompts, improve AI outputs, and boost AI model efficiency.


Most innovation-centric teams today are juggling multiple AI models, LLMs, and rising stakeholder expectations while they experiment with different AI inputs and outcomes. Do you feel like you’re in the same boat?

What if the fastest way to improve AI outcomes for your organization this quarter isn’t investing in bigger models, but gaining tighter control over the words you feed them? The answer is simple: better prompts drive better outcomes, and with them, more efficient decisions and growth. That’s exactly where prompt engineering best practices come in, turning prompt design into a structured process you can test, refine, and scale.

Adoption is no longer the question. According to a recent McKinsey report, the use of generative AI by organizations accelerated significantly, from 33% in 2023 to 71% by late 2024. That rapid growth has outpaced process maturity in many stacks.

So the question for most organizations and AI-driven enterprises isn’t “should we use more capable, intelligent AI models” but rather “how can we make AI models more reliable, safe, and cost-effective at scale?”

The sections below walk you through the most crucial prompt engineering best practices you can apply right away to get more accurate responses, reduce errors, and maximize the value of your AI models and prompts.

Prompt engineering, in simple terms, is the practice of carefully crafting instructions tailored to the outputs you need and feeding them to AI models to obtain the required outcomes. It’s more than typing random thoughts into a generative AI model; it’s about setting the right roles, goals, constraints, and examples so that the AI understands exactly what you need.

If you are using generative AI models like GPT, Gemini, or Claude, you might wonder why understanding prompt engineering is so crucial. Isn’t it just like having a casual conversation with AI and hoping it understands you the way your best friend would? Not quite.

AI doesn’t magically know what you want; it needs appropriate guidance: clear, structured, and purpose-driven instructions to truly deliver on your business needs.

Here’s why prompt engineering matters so much for both AI models and the people who use them:

One thing is clear: even small tweaks, like changing word order or omitting crucial context, can noticeably change the quality and accuracy of AI-generated answers. Such tiny shifts can affect everything from cost and latency to the time spent fixing poor outputs.

Consider a real-life example.

If you ask an AI model to “write a reply to a refund request,” it may give a vague, generic answer that falls short of your expectations.

However, instead of just writing a simple prompt like above, you can use a clearer prompt like “You are a customer service agent. The customer is requesting a refund for an order placed 45 days ago, which is beyond our refund policy. Craft a polite refusal message in under 100 words.”

A prompt like this steers the AI model toward a precise, policy-compliant response.

Well-crafted prompts don’t just improve response quality; they also cut down on manual reviews and speed up operations. Businesses and development teams using structured prompts can see 15 to 20% higher first-contact resolution and 20 to 30% faster handling times, which directly boosts customer satisfaction.

Now that we have understood the importance of prompt engineering, let us go through the crucial prompt engineering best practices that will help you get the most out of your AI systems.

If you have ever used an AI tool like ChatGPT or Cursor and received vague or off-target results, you know that prompt specificity is key. The more precise and detailed your prompt is, the more likely you are to get a useful answer. This is especially true when you’re working with complex systems or business-critical decisions.

Vague asks create vague outputs. The key is to define the “What, Why, and How” of your request in the prompt itself.

For example, instead of just typing in a basic prompt like “Summarize this report”, you can try the following prompt for specificity and better outcomes:

“Summarize this 20-page churn report for a board deck in 3 bullets: (1) biggest churn drivers, (2) segments most at risk, (3) top 2 fixes. Keep it under 60 words.”

Here, the explicit audience (board), format (bullets), and constraints (word count, focus) make outputs far more reliable.
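One way to make the “What, Why, and How” habit repeatable is to keep each part as a separate field and render the prompt from them, so nobody forgets the audience or the limits. The sketch below is illustrative only: the `PromptSpec` class and its field names are hypothetical, not part of any library.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the what / why / how of a request."""
    task: str                 # what you need
    audience: str             # who the output is for (the "why")
    output_format: str        # how the answer should be shaped
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        # One labeled line per part, so a missing part is easy to spot.
        lines = [
            f"Task: {self.task}",
            f"Audience: {self.audience}",
            f"Format: {self.output_format}",
        ]
        lines += [f"Constraint: {c}" for c in self.constraints]
        return "\n".join(lines)


spec = PromptSpec(
    task="Summarize the attached 20-page churn report.",
    audience="Board of directors (slide deck)",
    output_format="3 bullets: churn drivers, at-risk segments, top 2 fixes",
    constraints=["Under 60 words total"],
)
print(spec.render())
```

Rendering from fields also makes prompts easy to diff and version when you iterate on them.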

When everything gets jumbled into one long instruction, AI models can misinterpret what belongs where. The best way is to separate different parts of your prompt with clear markers or delimiters. Think of it like giving the AI a clean blueprint: system rules, task details, and user input.

For example:

System rules: Always respond in a professional tone.

Task: Summarize the following customer feedback in 3 key insights.

User: I found the onboarding confusing, and the setup steps were unclear.

This way, the AI knows what a rule is, what the task is, and what the actual content is.
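A minimal sketch of the same idea in code, assuming XML-style tags as the delimiter (triple quotes or `###` headers are common alternatives; the tag names here are our own choice, not a required format):

```python
def build_delimited_prompt(rules: str, task: str, user_input: str) -> str:
    """Wrap each part of the prompt in its own tag so the model can tell
    standing rules and the task apart from the content it should work on."""
    sections = {"rules": rules, "task": task, "user_input": user_input}
    return "\n".join(
        f"<{name}>\n{text.strip()}\n</{name}>" for name, text in sections.items()
    )


prompt = build_delimited_prompt(
    rules="Always respond in a professional tone.",
    task="Summarize the customer feedback inside <user_input> in 3 key insights.",
    user_input="I found the onboarding confusing, and the setup steps were unclear.",
)
print(prompt)
```

Keeping user-supplied text inside its own tag also makes it harder for that text to be mistaken for instructions.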

Free-form text is fine for brainstorming, but when you need reliability, ask for structure like tables, lists, or JSON. This is one of the top prompt engineering best practices to implement when your end goal is to make the output easier to use in reports or software.

For example, instead of a prompt like “Compare Tool A and Tool B,”

try a better prompt like “Compare Tool A and Tool B in a 2-column table with rows for Price, Features, Pros, and Cons.”

That way, you get output that is clean, scannable, and ready to drop into your slides.
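When the output feeds software rather than slides, JSON is usually the easiest structure to check. The sketch below asks for a JSON shape of our own design and validates the reply with Python’s standard `json` module; the reply used here is a hand-written stand-in for real model output, since no model is called.

```python
import json

REQUIRED_ROWS = ["Price", "Features", "Pros", "Cons"]

prompt = (
    "Compare Tool A and Tool B. Respond with JSON only, shaped as "
    '{"rows": [{"criterion": "...", "tool_a": "...", "tool_b": "..."}]}, '
    "with one row each for " + ", ".join(REQUIRED_ROWS) + "."
)


def parse_comparison(raw: str) -> list[dict]:
    """Parse the model's reply and check that every required row is present."""
    data = json.loads(raw)  # raises json.JSONDecodeError on malformed output
    rows = data["rows"]
    found = {row["criterion"] for row in rows}
    missing = [r for r in REQUIRED_ROWS if r not in found]
    if missing:
        raise ValueError(f"Reply is missing rows: {missing}")
    return rows


# Hand-written sample reply standing in for real model output.
sample = json.dumps({"rows": [
    {"criterion": c, "tool_a": "...", "tool_b": "..."} for c in REQUIRED_ROWS
]})
for row in parse_comparison(sample):
    print(f"{row['criterion']:<10} | {row['tool_a']} | {row['tool_b']}")
```

If parsing fails, a common pattern is to re-send the prompt with the error message attached rather than hand-fixing the output.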

AI can sound really convincing, but models sometimes mix things up because of poor prompts, incorrect or irrelevant data, or contextual misunderstanding. To avoid this, give the model relevant, up-to-date data to work with, or ask it to cite the sources behind its output. This helps keep answers factual and verifiable.

Use a prompt like, “Based on the attached sales data file, write a summary highlighting the top-selling product, the biggest drop in sales, and the overall trend. Include the numbers in your response. If you can’t find the right information, answer ‘insufficient data.’”

This way, your output is anchored in evidence, not guesses. It is one of the most effective ways to reduce AI hallucinations, and it lets stakeholders and decision-makers go straight to reliable data and sources.
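Grounding can also be checked after the fact. This sketch builds a prompt that restricts the model to supplied data with an explicit “insufficient data” escape hatch, then flags any number in the reply that never appears in the source. The sales figures and the reply are hypothetical, and the regex check is deliberately crude (it ignores thousands separators and units).

```python
import re

FALLBACK = "insufficient data"
NUMBER = re.compile(r"\d+(?:\.\d+)?")  # plain numbers only; "1,200" would split


def grounded_prompt(question: str, source_text: str) -> str:
    """Ask the model to answer only from the supplied data."""
    return (
        "Answer using ONLY the data between <data> tags. "
        "Quote the numbers you rely on. "
        f'If the data does not contain the answer, reply exactly "{FALLBACK}".\n'
        f"<data>\n{source_text}\n</data>\n"
        f"Question: {question}"
    )


def is_fallback(reply: str) -> bool:
    """True when the model declined to answer for lack of evidence."""
    return reply.strip().strip('."').lower() == FALLBACK


def unsupported_numbers(reply: str, source_text: str) -> list[str]:
    """Numbers in the reply that never appear in the source: a cheap hallucination flag."""
    source_nums = set(NUMBER.findall(source_text))
    return [n for n in NUMBER.findall(reply) if n not in source_nums]


# Hypothetical data and a pretend model reply containing one invented figure.
sales_csv = "product,units_prev,units_last\nWidget,1200,1350\nGadget,450,300"
prompt = grounded_prompt("Which product sold the most last quarter?", sales_csv)
reply = "Widget led with 1350 units, while Gadget fell to 280."
print(unsupported_numbers(reply, sales_csv))
```

A non-empty result does not prove the answer is wrong, but it tells a reviewer exactly which figures to double-check.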

Incorporating a couple of short, high-quality examples into your prompt can beat paragraphs of instruction. Show one “good” sample of the desired output to steer the AI’s response in the right direction.

This prompt engineering best practice is particularly useful for complex and creative tasks where the desired output is hard to describe in words.

Where possible, provide sample texts, data formats, reference templates or documents, code snippets, or real-time graphs and charts in the prompt itself. Such examples and references shape the AI’s tone, structure, and content, which results in less editing and fewer back-and-forths.
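A minimal sketch of few-shot prompting: input/output pairs are placed before the real input so the model can imitate their tone and shape. The “Example N input/output” labels and the release-note sample are our own illustrative choices, not a required format.

```python
def few_shot_prompt(instruction: str,
                    examples: list[tuple[str, str]],
                    new_input: str) -> str:
    """Show input/output pairs before the real input so the model copies their style."""
    parts = [instruction, ""]
    for i, (inp, out) in enumerate(examples, start=1):
        parts += [f"Example {i} input: {inp}", f"Example {i} output: {out}", ""]
    # End on an open "Output:" so the model continues the pattern.
    parts += [f"Input: {new_input}", "Output:"]
    return "\n".join(parts)


prompt = few_shot_prompt(
    "Rewrite each product note as a one-line release-note entry.",
    [("fixed crash when uploading big files",
      "Fixed: uploads of large files no longer crash the app.")],
    "added dark mode to settings page",
)
print(prompt)
```

One or two well-chosen pairs are often enough; adding many near-identical examples mostly costs tokens.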


When you are dealing with complex processes or want to automate larger tasks, don’t ask the AI to do everything at once; piling many requests into one prompt often leads to messy results. Split the work into smaller steps instead. This gives you more control and makes the output easier to refine.

For example:

Step 1: “Create a 5-bullet outline for the incident report for a specific scenario or organizational task.”

Step 2: “Write the executive summary (<150 words) that highlights all the crucial points.”

Step 3: “Finally, propose two mitigation actions with owners.”
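The three steps above can be run as a simple prompt chain, where each call receives the outputs of the earlier ones. In this sketch `fake_model` is a hypothetical stand-in for a real LLM client (swap in your provider’s SDK), and the incident text is made up for illustration.

```python
from typing import Callable


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call."""
    return f"[model reply to: {prompt.splitlines()[0][:40]}]"


STEPS = [
    "Create a 5-bullet outline for the incident report.",
    "Write the executive summary (<150 words) that highlights all the crucial points.",
    "Propose two mitigation actions with owners.",
]


def run_chain(steps: list[str], context: str,
              model: Callable[[str], str]) -> list[str]:
    """Run each step as its own call, feeding earlier outputs forward as context."""
    outputs: list[str] = []
    for step in steps:
        prior = "\n\n".join(outputs)
        prompt = (f"{step}\n\nIncident details:\n{context}\n\n"
                  f"Previous steps:\n{prior or '(none)'}")
        outputs.append(model(prompt))  # each result can be reviewed before the next step
    return outputs


results = run_chain(STEPS, "Checkout API returned errors for 40 minutes.", fake_model)
for r in results:
    print(r)
```

Because every step is a separate call, you can inspect, edit, or retry a single step without regenerating the whole report.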

To operationalize such multi-step AI workflows at scale, many organizations choose to hire AI developers who can design, integrate, and optimize AI-driven systems across teams.

If you need accountability for AI responses, you need to understand why the model picked a particular answer. One prompt engineering best practice here is to ask for a brief rationale.

For example, craft a prompt as follows:

“Recommend the best project management tool for a 10-person startup. Give your answer, then add 2 short bullets explaining why and how it would help.”

This way, you get both the decision and the reasoning without drowning in extra detail. It’s great for prioritization, triage, and policy calls. Reviewers can scan the rationale quickly, and the short format keeps the model from meandering into speculative logic you can’t verify.
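If you also pin down the format of the rationale, replies become easy to check automatically. This sketch assumes a format of our own invention (an `Answer:` line followed by `- ` bullets) and rejects replies that drift from it; the sample reply and “Tool X” are hypothetical.

```python
RATIONALE_FORMAT = (
    "Format your reply as one line 'Answer: <your pick>' followed by "
    "exactly 2 lines starting with '- ', each a short reason."
)


def parse_answer_with_rationale(reply: str,
                                max_bullets: int = 2) -> tuple[str, list[str]]:
    """Split an 'Answer: ...' line from its '- ' bullets; reject rambling replies."""
    lines = [ln.strip() for ln in reply.strip().splitlines() if ln.strip()]
    if not lines or not lines[0].lower().startswith("answer:"):
        raise ValueError("Reply must start with 'Answer:'")
    answer = lines[0].split(":", 1)[1].strip()
    bullets = [ln[2:].strip() for ln in lines[1:] if ln.startswith("- ")]
    # Every line after the answer must be a bullet, and not too many of them.
    if len(bullets) > max_bullets or len(bullets) != len(lines) - 1:
        raise ValueError("Expected only short '- ' bullets after the answer")
    return answer, bullets


sample = ("Answer: Tool X\n"
          "- Fits a small team's budget.\n"
          "- Quick to set up without admin help.")
answer, reasons = parse_answer_with_rationale(sample)
print(answer, reasons)
```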

It’s easy for an AI model to run off track if you don’t set clear boundaries. Define how long you want the response to be, what tone you prefer, and the level of variability you are comfortable with.

For instance, if you need concise, actionable insights, don’t leave room for vague answers. You can do this by setting limits on the word count, specifying a formal or casual tone, and asking for consistency in style.

Take the prompt below as an example:

“Write a Python script to implement a basic image classifier using transfer learning with TensorFlow. Keep the code concise (under 100 lines), and include comments to explain key sections, such as data preprocessing, AI model creation, and evaluation.”

This keeps answers from running long and helps the output fit your audience. Because the response is specific and actionable, it is also easier to integrate AI-generated solutions into your project.
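Stated limits are only useful if someone checks them. A minimal sketch, assuming you simply count words and lines in the returned text and re-prompt when a limit is exceeded (provider-side settings such as maximum tokens or temperature vary by API and are not shown here):

```python
from typing import Optional


def check_limits(text: str, max_words: Optional[int] = None,
                 max_lines: Optional[int] = None) -> list[str]:
    """Return human-readable violations of the length limits stated in the prompt."""
    problems = []
    words = len(text.split())
    lines = len(text.splitlines())
    if max_words is not None and words > max_words:
        problems.append(f"{words} words > limit of {max_words}")
    if max_lines is not None and lines > max_lines:
        problems.append(f"{lines} lines > limit of {max_lines}")
    return problems


# Pretend the model returned a 120-line script despite the "under 100 lines" request.
generated = "\n".join(f"x{i} = {i}" for i in range(120))
print(check_limits(generated, max_lines=100))
```

Feeding the violation message back in a follow-up prompt (“Your answer was 120 lines; shorten it to under 100”) is usually cheaper than trimming by hand.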

AI models perform best when they operate on the proper context. If you are looking for up-to-date information or something specific to a location, make sure to specify the timeframe and geography.

Without relevant, current data, AI models may give outdated or irrelevant responses, because their built-in knowledge stops at a training cutoff. For instance, if you are analyzing a product’s success in a particular region, the AI needs up-to-date local market data to give you an accurate insight. So always add temporal markers and mention the location explicitly.

Examples of time- and location-sensitive prompts:

“What are the latest sales trends in the UK for Q3 2025?”

“Summarize the top 3 cybersecurity threats for financial services companies in 2025. Keep it relevant to the U.S. market. Prefer sources from the last 30 days.”

Following this practice gives you answers grounded in current reality, not outdated references.
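A small template can make the time frame and market explicit every time, including today’s date, which a model does not reliably know on its own. This is an illustrative sketch; the function name and the example date are hypothetical.

```python
from datetime import date


def time_scoped_prompt(question: str, region: str, period: str, today: date) -> str:
    """Pin the question to an explicit period and market and state today's date,
    so the model is less likely to fall back on stale training-data assumptions."""
    return (
        f"Today's date: {today.isoformat()}.\n"
        f"Scope: {region}, {period}.\n"
        f"{question}\n"
        "If you lack information for this period, say so instead of using older data."
    )


p = time_scoped_prompt(
    "Summarize the top 3 cybersecurity threats for financial services companies.",
    region="U.S. market", period="2025", today=date(2025, 10, 1),
)
print(p)
```

In production you would pass `date.today()`; a fixed date is used here so the output is reproducible.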

Don’t just ask AI to “give me insights.” Provide the data it needs to base those insights on. Whether it’s a dataset, a report, or specific statistics, feeding the AI relevant information from the get-go ensures its response is built on facts.

To get sharper, real-world, and reliable outcomes from AI models, you can share snippets, tables, or KPIs directly in the prompt. You can get a better understanding from the following prompt example:

“Here’s last quarter’s pipeline data from August 2025 by segment. Find the top-performing segments, the 2 weakest links, and suggest one experiment per segment.”

Because the inputs are clear and structured, the result is far more actionable.
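A minimal sketch of putting KPIs directly into the prompt: rows are serialized as CSV with Python’s standard `csv` module and wrapped in a tag. The segment figures are hypothetical placeholders, not real pipeline data.

```python
import csv
import io


def table_to_prompt(rows: list[dict], instruction: str) -> str:
    """Serialize KPI rows as CSV inside the prompt so the model works from your numbers."""
    buf = io.StringIO()
    # lineterminator="\n" avoids the module's default "\r\n" line endings.
    writer = csv.DictWriter(buf, fieldnames=list(rows[0]), lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return f'{instruction}\n\n<data format="csv">\n{buf.getvalue().strip()}\n</data>'


# Hypothetical pipeline figures, for illustration only.
pipeline = [
    {"segment": "SMB", "deals": 42, "win_rate": 0.18},
    {"segment": "Mid-market", "deals": 17, "win_rate": 0.31},
    {"segment": "Enterprise", "deals": 6, "win_rate": 0.12},
]
prompt = table_to_prompt(
    pipeline,
    "Find the top-performing segments, the 2 weakest links, "
    "and suggest one experiment per segment.",
)
print(prompt)
```

CSV is compact in tokens and unambiguous about which number belongs to which segment.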

AI models generally follow instructions about what to do more reliably than instructions about what not to do. Instead of saying, “Don’t give me irrelevant details,” guide the model with clear, positive instructions like the prompts below.

“Focus on the key points or KPIs while generating an outcome.”

Instead of “Don’t be too technical”, you can try “Explain for finance leaders with minimal jargon.”

Use “write 90 to 120 words of email, friendly and direct” rather than “don’t write too long an email.”

Positive instructions reduce guesswork and make results more consistent across users. You will spend less time shaping tone and more time polishing content or responses that people can use.

To enhance the reasoning process of AI models, you can break complex queries into a chain of thought, a sequence of reasoning steps for the LLM to follow. Instead of simply asking for an answer, guide the AI through a logical flow:

Prompt examples:

“First, list the factors to consider, then evaluate them against some specific aspect, and finally, provide a recommendation.”

“Define the target market for the new product and the key problems it aims to solve, then evaluate the competitive landscape to identify gaps or unique opportunities, and finally, propose a product concept and feature set that would stand out in this market, providing clear value. Additionally, provide me 3 result-centric product scaling strategies.”

This technique encourages the model to work step by step, which can help it reach more accurate, well-reasoned conclusions. It also lets users see the logic behind the response and judge its reliability.

Chain-of-thought prompting is particularly useful for complex problems or when the reasoning process matters as much as the answer itself. Such prompts can draw deeper problem-solving out of the model.
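A sketch of a chain-of-thought template: the reasoning steps are numbered explicitly and the conclusion is marked with a fixed prefix so it can be extracted and checked. The step wording and the `Recommendation:` marker are illustrative assumptions; some newer reasoning models do this kind of stepwise work internally, so the benefit varies by model.

```python
from typing import Optional


def chain_of_thought_prompt(question: str, steps: list[str]) -> str:
    """Lay out the reasoning steps explicitly and ask for a clearly marked conclusion."""
    numbered = "\n".join(f"{i}. {s}" for i, s in enumerate(steps, start=1))
    return (
        f"{question}\n\nWork through these steps in order, "
        f"showing your reasoning for each:\n{numbered}\n\n"
        "End with a line that starts with 'Recommendation:'."
    )


def extract_recommendation(reply: str) -> Optional[str]:
    """Pull out the marked conclusion so reviewers can check it against the steps."""
    marker = "Recommendation:"
    for line in reversed(reply.splitlines()):
        if line.startswith(marker):
            return line[len(marker):].strip()
    return None


p = chain_of_thought_prompt(
    "Which launch plan should we pick?",
    ["List the factors to consider.",
     "Evaluate each factor against cost and time to market.",
     "Give a recommendation."],
)
print(p)
```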

With proven domain expertise and experience in prompt engineering, Bacancy’s experts specialize in crafting precise prompts and implementing prompt engineering best practices that help organizations unlock the full potential of AI models, increasing the accuracy, reliability, and efficiency of AI outcomes.

With Bacancy, you can trust that your AI systems and Generative AI models will deliver reliable, high-quality results. If you are looking to enhance your AI model’s performance or improve workflow automation efficiency, hire prompt engineers to take your AI-powered systems to the next level.

Effective prompt engineering directly impacts the accuracy, relevance, and speed of AI responses. It helps AI models to better understand context, reduce ambiguity, and generate more actionable insights, which in turn leads to improved performance in business operations.

By optimizing the length, style, and structure of prompts, you can reduce token usage, minimize processing time, and cut costs. Efficient prompt engineering ensures that AI models respond quickly and accurately without unnecessary resource consumption.

Some of the most common challenges in prompt engineering are prompts that are too vague or too specific, or that fail to account for the model’s limitations, resulting in ambiguous or irrelevant outputs. Consistent monitoring and iteration of prompts can help overcome these challenges and protect your AI’s overall performance and efficiency.
