Quick Summary

This blog post explores the key differences between ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform), two popular approaches to data integration. We compare ETL and ELT across several dimensions to show how each method processes data, highlight their pros and cons, and help you choose the best fit for your organization’s needs and data infrastructure.

Introduction

A few months ago, our team at Bacancy was working with a fast-growing healthcare client, a digital health platform focused on unifying patient records, device data, insurance APIs, and lab results into one innovative, intuitive dashboard for care providers.

On the surface, it sounded like a typical enterprise data integration project. But once we dove in, we realized: this wasn’t just another data pipeline; this was a moving puzzle of compliance, real-time needs, and scale.

We had to pull data from multiple EHR systems, some of which sent structured files nightly, while others streamed semi-structured data throughout the day. On top of that, sensitive information like patient vitals and insurance records had to be handled with HIPAA-grade care.

Initially, we leaned toward our trusted, battle-tested ETL setup. Extract the data, transform it to fit our schema, and then load it into the warehouse.

But it didn’t take long before the cracks started to show.

- Minor schema changes from one source would break the entire pipeline

- Transformations were tightly coupled with ingestion logic, slowing iteration

- Our analytics team was constantly waiting for data that had already arrived

That’s when our CTO, Binal Patel, called for a rethink.

“What if we flip the model, and go ELT instead?”

It was a simple question that led to a deeper architectural conversation.

One that forced us to pause and ask ourselves:

When is ETL still the right choice? And when does ELT make more sense, especially in complex, high-volume, regulated environments like healthcare?

This blog is a reflection of what we learned through that journey. If you’re a CTO, CIO, or product leader working on a data-intensive product, especially in healthcare or other compliance-heavy industries, this might help you make a decision that saves you months of rework down the road.

ETL vs ELT: Not Just Technical Terms, But Strategic Choices

When we sat down to re-evaluate our data strategy for the healthcare client, we quickly realized something: ETL and ELT are more than just acronyms; they represent two entirely different mindsets for building data pipelines. The decision goes beyond tools or file formats. It shapes how your architecture scales, how your teams collaborate, and how your business adapts over time.

Here’s how we reframed the two approaches for our leadership and product stakeholders:

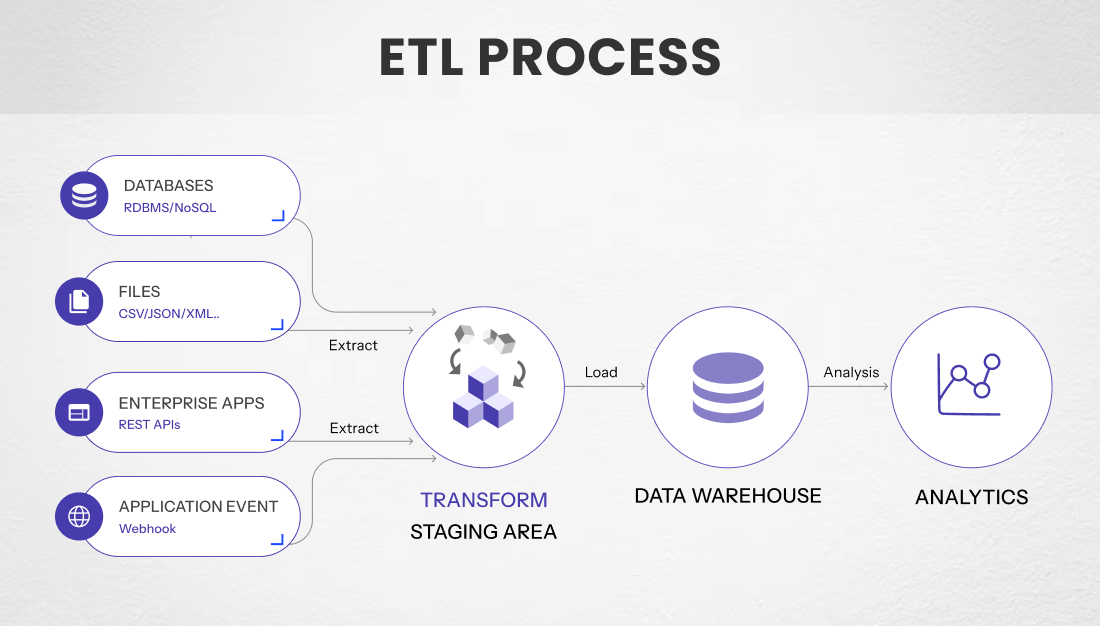

ETL has been the traditional approach for decades, and for good reason. It involves extracting data from source systems, transforming it into a clean, structured format before it ever hits your destination warehouse, and then loading it into a central system for use.

This model works well when data sources are stable, transformations are complex, and compliance demands that sensitive data be scrubbed or masked upfront.
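As a rough illustration of the extract, transform, load order described above, here is a minimal Python sketch. The source rows, the `vitals` table, and the in-memory SQLite database standing in for the warehouse are all hypothetical. The only point is that identifiers are pseudonymized and types are checked before anything is stored.

```python
import hashlib
import sqlite3

# Hypothetical nightly extract; a real pipeline would read an EHR export instead.
SOURCE_ROWS = [
    {"mrn": "MRN-0001", "heart_rate": "72"},
    {"mrn": "MRN-0002", "heart_rate": "88"},
]


def extract():
    """Extract: read records from the source system (stubbed here)."""
    return [dict(row) for row in SOURCE_ROWS]


def transform(rows):
    """Transform: pseudonymize identifiers and cast types BEFORE loading."""
    cleaned = []
    for row in rows:
        # Unsalted, truncated hash for illustration only; a production
        # pipeline would need a keyed or salted scheme.
        token = hashlib.sha256(row["mrn"].encode("utf-8")).hexdigest()[:16]
        # int() raises on malformed values, so bad rows never reach storage.
        cleaned.append((token, int(row["heart_rate"])))
    return cleaned


def load(conn, rows):
    """Load: only cleaned rows are written to the destination."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS vitals (patient_token TEXT, heart_rate INTEGER)"
    )
    conn.executemany("INSERT INTO vitals VALUES (?, ?)", rows)
    conn.commit()


warehouse = sqlite3.connect(":memory:")  # stand-in for a real warehouse
load(warehouse, transform(extract()))
loaded = warehouse.execute(
    "SELECT patient_token, heart_rate FROM vitals ORDER BY heart_rate"
).fetchall()
```

In this sketch the raw record number never exists in the destination, which is the property that makes the pattern attractive for regulated data.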

Key strengths of ETL:

- Great for structured, predictable data from CRMs, EHRs, etc.

- Enables strict pre-load validation and anonymization; useful for regulated industries like healthcare

- Offers tighter control over data quality before storage

But here’s where it gets tricky:

- Any source-side schema change can break the pipeline

- Dev teams end up owning all transformation logic, making iterations slower

- Slower data availability; it’s clean, but often not real-time
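To make the schema-change risk concrete, here is a small hypothetical Python example. The field names and the rename from `heart_rate` to `hr` are invented for illustration. A transform that assumes a fixed source schema fails as soon as the source changes a field name, and because the transform runs before the load, nothing reaches the warehouse.

```python
def transform_vitals(row):
    """Strict pre-load transform that assumes a fixed source schema."""
    return {
        "patient_id": row["patient_id"],
        "heart_rate": int(row["heart_rate"]),
    }


old_row = {"patient_id": "p-1", "heart_rate": "72"}
# Hypothetical source-side change: the vendor renames heart_rate to hr.
new_row = {"patient_id": "p-1", "hr": "72"}

ok_result = transform_vitals(old_row)  # works with the original schema

try:
    transform_vitals(new_row)
    pipeline_failed = False
except KeyError as missing:
    # The transform step raises, so the load step never runs for this batch.
    pipeline_failed = True
    missing_field = missing.args[0]
```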

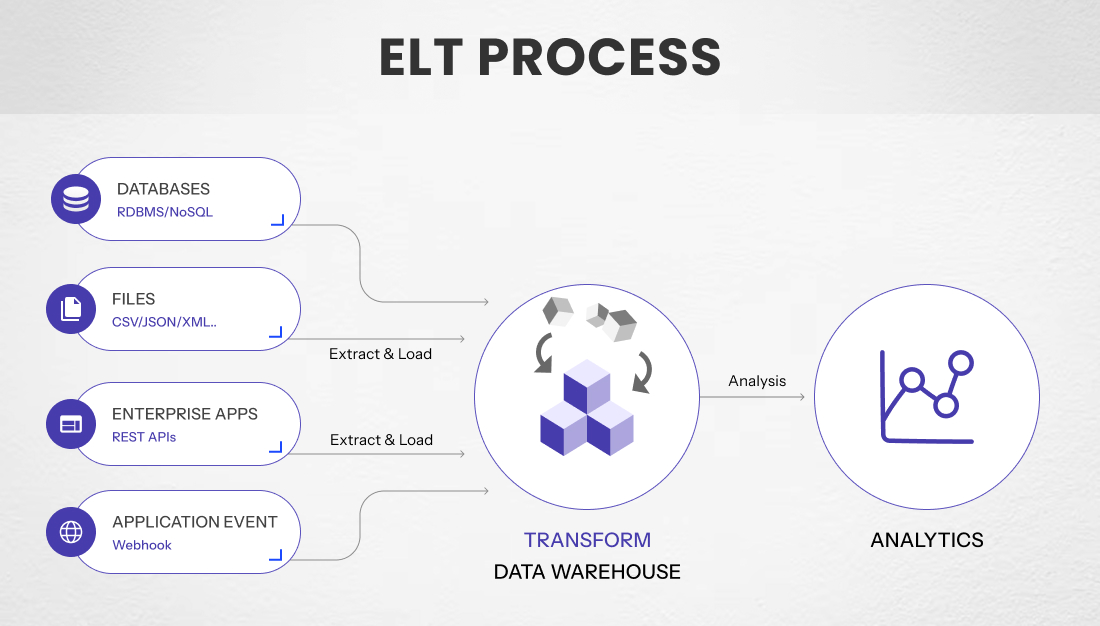

ELT flips the traditional model, and it’s made possible by the rise of cloud-native data warehouses like Snowflake, BigQuery, and Redshift.

In ELT, raw data is extracted and loaded as-is into the warehouse, and transformation happens later, within the warehouse itself. This model gives teams more flexibility, faster access to raw data, and the ability to iterate quickly using tools such as dbt (data build tool) or native SQL transformations.
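The load-first, transform-later order can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production setup: an in-memory SQLite database stands in for a cloud warehouse, and the `raw_vitals` and `clean_vitals` tables and their rows are hypothetical. Raw records land untouched, and the cleanup is plain SQL that runs inside the "warehouse" afterwards.

```python
import sqlite3

warehouse = sqlite3.connect(":memory:")  # stand-in for a cloud warehouse

# Load: land the records as-is, everything as text, with no cleaning.
warehouse.execute(
    "CREATE TABLE raw_vitals (patient_id TEXT, heart_rate TEXT, recorded_at TEXT)"
)
raw_records = [
    ("p-1", "72", "2024-01-01T08:00:00"),
    ("p-1", "n/a", "2024-01-01T09:00:00"),   # bad reading, still loaded
    ("p-2", " 88 ", "2024-01-01T08:30:00"),  # stray whitespace, still loaded
]
warehouse.executemany("INSERT INTO raw_vitals VALUES (?, ?, ?)", raw_records)

# Transform: runs later, inside the warehouse, and can be rewritten
# at any time without re-ingesting the raw data.
warehouse.execute(
    """
    CREATE TABLE clean_vitals AS
    SELECT patient_id,
           CAST(TRIM(heart_rate) AS INTEGER) AS heart_rate,
           recorded_at
    FROM raw_vitals
    WHERE TRIM(heart_rate) <> ''
      AND TRIM(heart_rate) NOT GLOB '*[^0-9]*'  -- keep digit-only readings
    """
)

raw_count = warehouse.execute("SELECT COUNT(*) FROM raw_vitals").fetchone()[0]
clean_rows = warehouse.execute(
    "SELECT patient_id, heart_rate FROM clean_vitals ORDER BY patient_id"
).fetchall()
```

All three raw rows are queryable immediately after the load, while the cleaned table contains only the two valid readings.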

Why ELT is gaining ground:

- Ideal for high-volume, semi-structured, or real-time data

- Data becomes available almost immediately after ingestion

- Empowers analysts and product teams to handle transformations; no need to wait on engineering

- Scales well with modern, elastic cloud data infrastructure

But it comes with its own challenges:

- Raw, sensitive data lives in the warehouse, which means strong governance and security are non-negotiable

- Without disciplined transformation layers, things can get messy

- Warehousing costs can spike if computing resources aren’t well-managed
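One common shape for the governance point above is to have analysts query a masked view instead of the raw table. The Python sketch below is only an illustration: the `raw_claims` table, the `claims_for_analysts` view, and the rows are hypothetical, and SQLite has no access control, so it shows the masking logic only. In a real warehouse you would pair a view like this with role-based permissions on the raw table.

```python
import sqlite3

warehouse = sqlite3.connect(":memory:")  # stand-in; SQLite cannot enforce roles

# Raw, sensitive data as it would land after an ELT load.
warehouse.execute(
    "CREATE TABLE raw_claims (patient_name TEXT, member_id TEXT, claim_amount REAL)"
)
warehouse.executemany(
    "INSERT INTO raw_claims VALUES (?, ?, ?)",
    [
        ("Jane Example", "M-0001", 120.50),
        ("John Example", "M-0002", 75.00),
    ],
)

# Masked view: drops the name and exposes only the last two characters
# of the member ID.
warehouse.execute(
    """
    CREATE VIEW claims_for_analysts AS
    SELECT '***' || substr(member_id, -2) AS member_ref,
           claim_amount
    FROM raw_claims
    """
)

masked_rows = warehouse.execute(
    "SELECT member_ref, claim_amount FROM claims_for_analysts ORDER BY member_ref"
).fetchall()
```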

Why It Mattered in Our Project

In our case, the client’s product needed to aggregate patient vitals from wearable devices, sync lab results, and reconcile insurance claims, all within a few hours. That kind of speed and flexibility simply wasn’t possible with a pure ETL model.

We ultimately adopted a hybrid strategy: using ETL for early-stage data that involved compliance-sensitive workflows, and ELT for everything else, especially the fast-changing, semi-structured data from APIs and medical IoT devices.
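The routing rule behind a hybrid setup like this can be expressed very simply. The Python sketch below is a hypothetical simplification, not the actual project code: the `Source` type, the `compliance_sensitive` flag, and the source names are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    compliance_sensitive: bool  # must be masked or validated before storage


def choose_pipeline(source: Source) -> str:
    """Compliance-sensitive sources go through ETL; everything else goes ELT."""
    return "etl" if source.compliance_sensitive else "elt"


# Hypothetical sources, named only for this example.
sources = [
    Source("nightly_ehr_export", compliance_sensitive=True),
    Source("device_telemetry_api", compliance_sensitive=False),
]

routing = {source.name: choose_pipeline(source) for source in sources}
```

Keeping the rule in one place makes it easy to audit which sources bypass pre-load masking, and to move a source between paths when its requirements change.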

This experience taught us that ETL vs ELT isn’t a binary decision; it’s a balancing act. And making the right call starts with understanding your product’s data needs, team dynamics, and regulatory requirements.

Choosing ETL, ELT, or Both: A Decision Framework for Leaders

As we learned in our healthcare project, there’s rarely a one-size-fits-all answer. The decision isn’t about which is better in theory; it’s about which is right for your specific use case, architecture, and growth stage.

Whether you’re building an MVP for a digital health startup or scaling a mature clinical analytics platform, here’s a framework we now use internally to guide that decision.

Start by Asking These Leadership-Level Questions:

1. What kind of data are we working with?

   - Structured, slow-changing data often favors ETL

   - Semi-structured or fast-moving data fits better with ELT

2. Who needs access to the data, and how soon?

   - Is real-time or near-real-time insight a product requirement?

   - Or can data be processed in scheduled batches?

3. Where are we applying transformations?

   - Are you anonymizing, validating, or enriching data before storage (compliance-sensitive)?

   - Or do your analysts need raw access for exploration and modeling?

4. How mature is our data infrastructure?

   - Legacy on-prem environments lean toward ETL

   - Cloud-native stacks with Snowflake/BigQuery are optimized for ELT

5. Do we have governance policies in place?

   - Can your warehouse securely hold raw sensitive data?

   - Is it clear who owns the transformation logic: engineering or analytics?

| Criteria | ETL | ELT |
|---|---|---|
| Data Sensitivity (e.g., PHI) | Mask or transform before storage | Raw sensitive data stored requires strong governance |
| Real-Time / Streaming Needs | Typically batch-based | Ideal for near real-time ingestion |
| Infrastructure | On-prem or hybrid legacy environments | Cloud-native architectures (e.g., Snowflake, BigQuery) |
| Transformation Ownership | Engineering-centric | Analysts & product teams can self-serve |
| Scalability | Needs heavy infra planning | Scales flexibly with cloud computing |
| Data Exploration | Limited access to raw data | Analysts can explore raw & transformed data |
| Compliance Focus | Easier to control pre-load data handling | Needs robust controls & audit layers post-load |
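The five questions and the comparison above can be turned into a rough first-pass heuristic. The Python sketch below is one possible encoding and nothing more: the `Workload` fields, the one-vote-per-signal weighting, and the rule that a masking requirement combined with near-real-time needs points to a hybrid are all assumptions made for illustration, not a validated scoring model.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    structured_and_stable: bool       # question 1
    needs_near_real_time: bool        # question 2
    must_mask_before_storage: bool    # question 3
    cloud_native_warehouse: bool      # question 4
    warehouse_governance_ready: bool  # question 5


def recommend(w: Workload) -> str:
    """Return 'ETL', 'ELT', or 'hybrid' using simple, equal-weight votes."""
    # Assumption: conflicting hard requirements are best served by a hybrid.
    if w.must_mask_before_storage and w.needs_near_real_time:
        return "hybrid"

    etl_votes = sum([
        w.structured_and_stable,
        w.must_mask_before_storage,
        not w.cloud_native_warehouse,
    ])
    elt_votes = sum([
        not w.structured_and_stable,
        w.needs_near_real_time,
        w.cloud_native_warehouse and w.warehouse_governance_ready,
    ])

    if etl_votes > elt_votes:
        return "ETL"
    if elt_votes > etl_votes:
        return "ELT"
    return "hybrid"


legacy_batch = Workload(True, False, True, False, False)
cloud_analytics = Workload(False, True, False, True, True)
regulated_streaming = Workload(False, True, True, True, True)

results = [recommend(w) for w in (legacy_batch, cloud_analytics, regulated_streaming)]
```

A function like this is a conversation starter for leadership reviews rather than a substitute for one; the value is in forcing each question to get an explicit answer.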

What This Means for Product Delivery, Cost, and Team Dynamics

The ETL vs ELT decision isn’t just a back-end technical detail; it affects how your teams build, ship, and scale your product. We saw this firsthand in our healthcare client’s project: the architecture we chose directly influenced how quickly we could deliver features, how flexible our teams could be, and how sustainably the system could scale without ballooning costs.

Let’s unpack the ripple effects.

Product Delivery & Time-to-Market

Choosing ELT allowed our client’s product teams to work faster and test features more independently. Since raw data was available in the warehouse almost instantly, analysts could build quick prototypes, and product managers could explore new use cases without waiting for dev sprints.

- ELT = Faster experimentation and shorter iteration cycles

- ETL = Longer cycles, since changes need coordination with engineering

For startups or product teams that iterate frequently, ELT offers a clear speed advantage. For large enterprises where data quality must be tightly controlled upfront, ETL may still be more reliable.

Cost Efficiency (Short-Term vs. Long-Term)

At first glance, ELT might seem cheaper; after all, you’re leveraging pay-per-use cloud computing and skipping the need for dedicated transformation servers.

But we’ve learned that costs scale differently depending on your data usage patterns:

- ETL can be cost-effective for static workloads with minimal transformation

- ELT becomes cost-efficient when data volume is high, and transformation logic needs to evolve quickly

- However, ELT can become expensive if you don’t optimize warehouse compute (e.g., unused queries, uncompressed staging tables)

Leadership tip: If you’re going ELT, involve your DevOps or FinOps teams early. Cloud cost optimization needs to be part of the conversation from day one.

Team Collaboration & Ownership

This is one of the most underestimated dimensions, but one that deeply influences long-term product success.

- ETL pipelines are often controlled entirely by engineering teams. Business and product stakeholders have limited visibility into how data is transformed.

- ELT opens the door for analytics engineers, product analysts, and even PMs to contribute to transformation logic, especially if you’re using tools like dbt.

This shift in ownership led to faster insight delivery, fewer handoffs, and a sense of shared responsibility across roles.

We often say: ETL centralizes control. ELT decentralizes speed.

The Strategic Tradeoff

There’s no “free win” here; the trade-off is real:

| You're likely to gain... | But you must be ready to manage... |
|---|---|
| Speed & flexibility (ELT) | Governance, cost control |
| Centralized quality (ETL) | Slower iteration, dev dependency |
| Cross-functional collaboration (ELT) | Training, process discipline |

Conclusion: It's Not Just a Pipeline; It's a Product Decision

What started as a technical fork in the road, ETL or ELT, quickly became a strategic decision point in our healthcare project. The choices we made influenced everything from how fast our clients could roll out new features to how their teams collaborated to how securely they handled sensitive patient data.

We didn’t abandon one for the other. Instead, we built a hybrid pipeline that respected the needs of a regulated industry while unlocking the speed and flexibility of modern cloud data platforms.

And that’s the real takeaway for tech leaders:

The best data architecture isn’t the one that sounds best in theory; it’s the one that aligns with your product goals, compliance needs, and team capabilities.

For CTOs, CIOs, and Product Leaders:

- If you’re building a product that depends on data, especially in healthcare, don’t treat your pipeline strategy as an afterthought.

- Weigh not just the tools, but the people who use them, the data they need, and the pace your business wants to move at.

- And don’t be afraid to blend ETL and ELT where it makes sense. The smartest pipelines are rarely black and white.

Need a Second Opinion on Your Data Strategy?

We’ve helped healthcare and tech companies modernize their pipelines with the right mix of compliance, cloud, and collaboration.

If you’re building a data-intensive product and want to avoid costly rewrites later, we’re happy to share what’s worked (and what hasn’t) in the real world.

Let’s talk. Your architecture decisions today are the foundation of your product’s growth tomorrow.