Quick Summary

Large Language Models (LLMs) are the driving force behind today’s AI revolution. Advances in LLMs are reshaping how businesses operate, bringing multimodal capabilities to automation, decision-making, customer engagement, and more. This blog post highlights the top Large Language Models that businesses can use to gain a competitive edge. We explain what LLMs are, walk through the list of the best LLM models, and cover key factors to consider when choosing the right one. Let’s dive in.


Introduction

In January 2026, GPT‑5 received a major update that made it smarter, faster, and better at handling complex tasks. It can now follow longer conversations, provide more accurate answers, and work with text, images, and speech all at once.

But did you know that other models, including several open-weight ones, are quietly outperforming even the most popular models in specific areas?

For example, Gemini 2.5 Pro shines in reasoning-heavy research and enterprise decision-making, Claude 4 is great at long-context, multi-step planning, and Mistral Large delivers cost-efficient multilingual support without sacrificing performance. Models like DeepSeek-R1 and Qwen 3 are also gaining attention for tackling complex, data-intensive automation.

Choosing between top LLM models today isn’t just about popularity. It’s about finding the model that fits your exact needs, whether that’s multimodal content creation, advanced analytics, code completion, or real-time decision-making. The right model can save time, reduce errors, scale with your projects, and unlock capabilities that generic AI tools just can’t.

To make things easy for you, we have curated a list of the best LLM models in 2026 and explained how businesses can use them to gain an AI advantage. But before jumping into the list, let us look at what LLMs are and how they transform business operations.

What is a Large Language Model (LLM)?

A Large Language Model, or LLM, is an advanced AI system that can read, interpret, and write text in a human-like way. It learns from huge amounts of data to recognize patterns in language, capture the meaning behind words, and generate answers or content that make sense in context.

You can use LLMs for things like translating text, summarizing documents, creating reports, or even writing marketing content.

Also, many open-weight LLMs can run locally on your own computer, including macOS machines, so you can use them without relying on the cloud. This makes it easier to experiment, test ideas, and get results fast. With the right LLM, your business can save time, improve accuracy, and deliver smarter solutions for your customers.

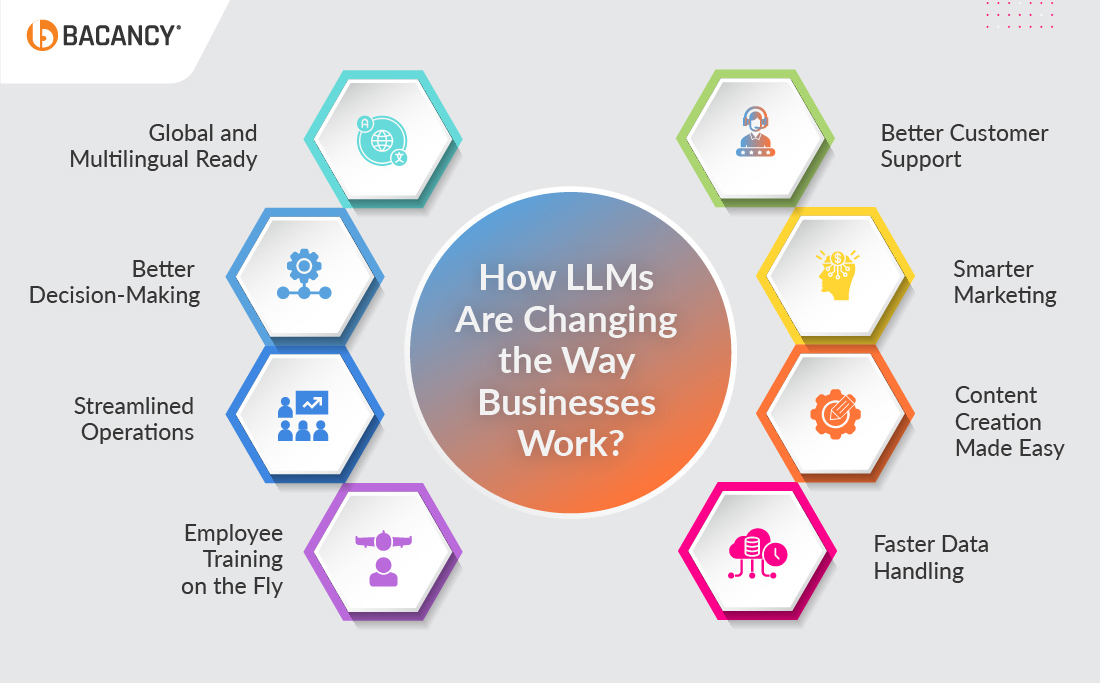

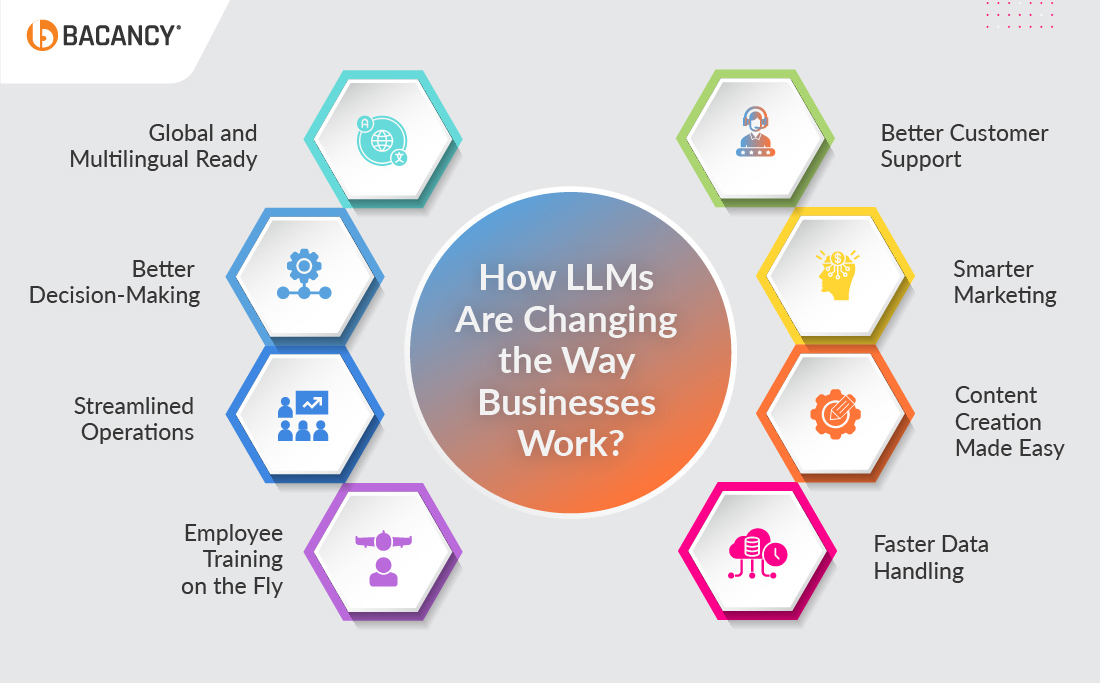

How LLMs Are Changing the Way Businesses Work

LLMs today are not just about generating text. Models like GPT‑5, Gemini 2.5, Claude 4, and Mistral Large can handle huge amounts of data, automate tasks, and give insights that actually help businesses make smarter decisions. Companies are using them to do more than support work; they’re changing how work gets done.

- Better Customer Support: Instead of waiting in long support queues, LLMs can answer common questions instantly and accurately. Your team can focus on tricky issues, while AI handles the routine stuff.

- Smarter Marketing: LLMs can create emails, social media posts, and product recommendations that feel personal. This means higher engagement and better results without overloading your marketing team.

- Content Creation Made Easy: From reports to blogs, LLMs can help generate high-quality content fast. They can summarize long documents or give new ideas, saving hours for your team.

- Faster Data Handling: LLMs can read, organize, and analyze tons of information from emails, reports, or spreadsheets. That means faster decisions, fewer mistakes, and more insights from data you already have.

- Employee Training on the Fly: AI can guide employees, provide instant feedback, and even create personalized learning paths. Your team stays up to date without waiting for formal training programs.

- Streamlined Operations: LLMs can predict demand, optimize inventory, and make supply chains smarter. Companies using models like Claude 4 or Mistral Large are cutting costs and working more efficiently.

- Better Decision-Making: LLMs can spot trends, highlight risks, and give recommendations in real-time. Leaders get insights that help them make smarter, faster decisions.

- Global and Multilingual Ready: Modern LLMs work in multiple languages, making it easier for businesses to communicate with customers or teams around the world. No language barriers slowing you down.


15 Best LLM Models That Drive AI Excellence

From generating and interpreting images to translating text and carrying out tasks in a user’s native language, LLMs are transforming industries with their advanced AI capabilities. Here, we have listed some of the best LLM models that enterprises can use to achieve their goals and drive growth.

| Top LLM Models | Developed By | Use Cases |
|---|---|---|
| GPT-5 | OpenAI | Next-gen AI for content creation; content creation for blogs, articles, and social media; research and advanced reasoning across industries |
| GitHub Copilot | GitHub | Code suggestions and completions for developers; bug fixing and error correction in code; automated documentation and comment generation |
| GPT-4.5 | OpenAI | Enhanced natural language understanding; multi-turn conversations; content generation and creative writing |
| Gemini 2.5 Pro / Flash | Google DeepMind | Scientific research and advanced reasoning; healthcare AI for diagnostics and patient data analysis; AI-based enterprise solutions for decision-making and automation |
| Llama 4 | Meta | Domain-specific fine-tuning for enterprises; research and AI model development; fast deployment of customized AI |
| Mistral Large | Mistral AI | Business applications with open-weight models; multilingual tasks and translation; efficient reasoning for enterprise workflows |
| DeepSeek-R1 | DeepSeek | Advanced reasoning-heavy tasks; competitive AI research and development; cost-optimized AI for enterprises |
| Gemma 3 | Google | Ethical AI applications in customer service and support; conversational interfaces for apps and platforms; high-precision analytics for enterprises |
| Claude 4 | Anthropic | AI alignment and ethics-driven solutions for business automation; text summarization and analysis; scalable AI integration for enterprise systems and workflows |
| Phi-3 | Microsoft | Reasoning and inference for scientific research and data analysis; language and vision task optimization; high-accuracy AI models for complex problem-solving and decision-making |
| Qwen 3 | Alibaba Cloud | Multilingual AI applications; coding support and programming tasks; business intelligence and analytics |
| Grok 4 | xAI | Real-time customer engagement; interactive social media experiences; trend analysis and instant insights |
| Falcon 3 | Technology Innovation Institute (UAE) | Cost-efficient enterprise AI solutions; open-source NLP and NLU tasks; scalable text processing for businesses |
| PaLM 2 | Google | Enterprise-scale AI applications; advanced reasoning and decision-making; multimodal AI for text, vision, and more |
| Bloom 6.1 | BigScience | Multilingual text generation and translation; research and experimentation at scale; community-driven AI development |

GPT-5

GPT-5 is the latest addition to OpenAI’s Generative Pre-trained Transformer series and is considered one of the best LLM models for enterprise use. It delivers faster responses, stronger reasoning, and deeper contextual understanding than earlier versions. With support for text, speech, and image processing, GPT-5 works well across industries. Enterprises increasingly use GPT-5 for automation, research, analytics, and customer engagement at scale.

Reasons to choose:

- Superior reasoning and contextual accuracy

- Faster, more efficient performance for enterprises

- Enhanced multimodal capabilities (text, speech, images)

GitHub Copilot

GitHub is a widely used developer platform where enterprises and businesses store, manage, and share their codebases. GitHub Copilot is its AI-powered coding assistant, designed to help developers write, debug, and optimize code faster. It integrates with popular IDEs to provide context-relevant suggestions and streamline software development. From smart code suggestions to automating repetitive tasks, this LLM-powered assistant helps developers navigate complex projects efficiently.

Reasons to choose:

- Real-time, reliable code suggestions

- Increases developer productivity with coding accuracy

- Supports multiple programming languages

- Seamless integration with GitHub and IDEs

GPT-4.5 (Orion) – OpenAI

OpenAI’s GPT-4.5 (code-name Orion) is a general-purpose model released on February 27, 2025. It builds on the foundations of GPT-4 and GPT-4o with better emotional intelligence (EQ), more natural and fluid conversations, and improved accuracy, especially in summarization, content generation, and multilingual contexts. It supports text and image inputs, but not features like voice mode, video, or screen sharing. Pro users can access it via ChatGPT, and the API supports function calling, structured output, streaming, and system messages.

Reasons to choose:

- Improved conversational nuance and empathy; understands emotional cues better.

- Stronger accuracy and reduced hallucination compared to previous versions, especially in multilingual tasks.

- Robust support for APIs (function calling, image input, structured outputs, streaming) for serious business/enterprise integration.

Gemini 2.5 Pro / Flash

Google DeepMind’s Gemini 2.5 Pro and Flash are the latest multimodal LLMs, offering stronger reasoning, faster performance, and efficiency improvements over earlier versions. With advanced context handling, these models support complex enterprise use cases, from customer interactions to large-scale content generation. Gemini 2.5 is tightly integrated across Google’s ecosystem, powering products like Gmail, Google Docs, and AI-driven search, making it one of the best LLMs for creative content support in business.

Reasons to choose:

- Enhanced reasoning and efficiency

- Multimodal capabilities with large context support

- Optimized for enterprise and real-time applications

- Strong integration with Google’s productivity tools

Meta Llama 4

Llama 4 is the next-generation family of open Large Language Models developed by Meta, available in variants such as Scout, Maverick, and Behemoth. These models push forward in reasoning, efficiency, and scalability, making them highly valuable for both research and enterprise adoption. Llama 4 continues Meta’s tradition of open access, enabling developers and researchers worldwide to experiment, fine-tune, and build advanced AI-powered applications.

Reasons to choose:

- Openly available weights with cutting-edge performance improvements

- Multiple variants tailored for different performance needs

- Cost-efficient compared to many closed-source alternatives

- Strong community support and rapid ecosystem growth

Mistral Large

Mistral Large delivers top-tier reasoning, code generation, and instruction-following performance, making it one of the best large language models for enterprise and developer use cases. It supports dozens of spoken languages and over 80 programming languages, including Python, Java, C++, and JavaScript. With a 128K-token context window, plus features like function calling and structured JSON outputs, it integrates smoothly with APIs, tools, and complex workflows.

Reasons to choose:

- Excellent multilingual fluency; works well across many languages, not just English

- Strong in code, math, and reasoning benchmarks; reduces hallucinations by admitting uncertainty where needed

- Features like function calling and structured output (JSON) make it easier to build business-oriented tools and automate tasks
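
To make the function-calling idea concrete, here is a minimal, vendor-neutral sketch of what an application might do once a model returns a structured JSON tool call. The JSON shape, the `get_order_status` tool, and the `TOOLS` registry are all hypothetical and chosen for illustration; each provider's real API defines its own request and response format.

```python
import json

# Hypothetical business function the model is allowed to call.
# A real system would query a database or an internal service here.
def get_order_status(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}

# Illustrative registry: tool name -> callable plus its required arguments.
TOOLS = {
    "get_order_status": {"func": get_order_status, "required": ["order_id"]},
}

def dispatch_tool_call(raw_model_output: str) -> dict:
    """Parse a JSON tool call emitted by a model and run the matching function."""
    call = json.loads(raw_model_output)  # malformed JSON raises a ValueError subclass
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    missing = [key for key in TOOLS[name]["required"] if key not in args]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return TOOLS[name]["func"](**args)

# Assumed shape of a structured model response (not any vendor's exact format).
example_output = '{"name": "get_order_status", "arguments": {"order_id": "A-1001"}}'
result = dispatch_tool_call(example_output)
print(result)
```

Validating the tool name and arguments before calling anything is the important design choice here: model output should be treated as untrusted input, even when it arrives as well-formed JSON.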

DeepSeek-R1

DeepSeek-R1 is a high-reasoning mixture-of-experts model and is quickly emerging as one of the best LLM models for complex problem solving. It has 671 billion total parameters, with only about 37 billion activated per query, which keeps it efficient without sacrificing power. With a 128K token context window, it handles long documents, advanced reasoning, code generation, and math tasks very well. Distilled versions also make it usable in lower-compute environments.

Reasons to choose:

- Excellent reasoning and problem-solving capability across domains like math, coding, and logical reasoning

- Handles long contexts well (128K tokens), which helps with tasks like summarization of large documents or workflows

- Distilled versions make it usable even with less powerful hardware, lowering cost and resource needs
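
The "671 billion total, about 37 billion active" figures are easier to picture with a toy example. The sketch below shows generic top-k expert routing, the basic idea behind mixture-of-experts models. The expert count, `k`, and router scores are made up for illustration and are not DeepSeek-R1's actual configuration or routing code.

```python
import math

def softmax(scores):
    """Convert raw router scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

# Figures quoted in the text above (in billions of parameters).
TOTAL_PARAMS_B = 671
ACTIVE_PARAMS_B = 37
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B  # roughly 5.5% of weights per query

# Toy router: 8 hypothetical experts, 2 activated per token.
scores = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.5, 0.3]
chosen = route_top_k(scores, k=2)

print(f"active fraction: {active_fraction:.1%}")
print("experts used:", [index for index, _ in chosen])
```

Only the selected experts run for a given token, which is why the compute cost per query tracks the active parameter count rather than the total.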

Gemma 3

Gemma 3 is the latest generation of Google DeepMind’s open-model family, built on the same research powering Gemini 2.0. It supports both text and image inputs and comes in sizes of 1B, 4B, 12B, and 27B parameters, with context windows up to 128K tokens. The model is also capable of working across 140+ languages, making it a strong fit for businesses with global operations.

Reasons to choose:

- Handles long-context tasks effectively, ideal for summarization and document analysis

- Multimodal (text + image) capabilities for versatile applications

- Offers multiple sizes for flexibility across devices and infrastructure

Claude 4

Released in May 2025, Claude 4 includes Sonnet 4 and Opus 4, two models focused on improved reasoning, sustained performance, and better developer tools. Sonnet 4 is built for efficiency and everyday business tasks, while Opus 4 is designed for complex, long-running workflows, especially coding and agent-type tasks. Both models support text and image input.

Reasons to choose:

- Better at complex reasoning and planning, especially for long, multi-step tasks

- Opus 4 sets new benchmarks for coding performance; Sonnet 4 balances speed, accuracy, and cost

- Extended memory and continuity features, such as remembering local files, maintaining context over longer periods, and “thinking summaries”

Microsoft’s Phi

Phi is a family of small language models optimized for efficiency and strong reasoning, even in resource-constrained settings. Key Phi-3 variants include Phi-3-mini, Phi-3-small, Phi-3-medium, and Phi-3-vision (which handles both image and text input). Phi-3-mini supports up to 128K tokens of context; Phi-3-small and Phi-3-medium scale up the parameter count (7B and 14B, respectively) for more complex reasoning tasks. These models are available via Azure AI Foundry, Hugging Face, and Ollama.

Reasons to choose:

- Provides accurate and reliable insights based on data

- Performs well across tasks ranging from basic reasoning to vision analysis

- Released under the permissive MIT License

- Designed to deliver strong performance at small model sizes

Qwen 3

Qwen 3 is a hybrid reasoning model family developed by Alibaba Cloud, with both dense and Mixture-of-Experts (MoE) architectures. It offers variants from 0.6B to 235B parameters (with MoE models activating smaller subsets per query). It supports two modes, thinking and non-thinking, so simpler tasks run faster while complex tasks receive deeper reasoning. Qwen 3 excels in multilingual tasks (119 languages), logic, and coding, making it useful for both enterprises and research.

Reasons to choose:

- Strong performance in math, coding, logic, and reasoning

- Flexible “thinking” and “non-thinking” modes balance speed vs depth

- Broad multilingual and translation capabilities

- Open-weight release, easing integration and experimentation

Grok 4

Created by xAI, Grok 4 is the latest flagship model and is available in standard and “Heavy” (multi-agent) variants. It supports massive context windows (≈ 256K tokens), strong reasoning, coding capabilities, and real-time tool integration. Variants like Grok 4 Code are tailored for programming, while structured outputs and function calling make it highly practical for enterprise adoption.

Reasons to choose:

- Exceptional reasoning in math, coding, and logical workflows

- Very large context window for long documents and conversations

- Variants tailored for coding and agent-style use cases

- Supports function calling and external tool integration
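
Context windows are measured in tokens, not characters, so it helps to estimate whether a document will fit before sending it. The sketch below uses a rough rule of thumb of about 4 characters per token, which is an assumption: real counts depend on the model's tokenizer and the language of the text. The function names and the reserved output budget are illustrative.

```python
import math

CHARS_PER_TOKEN = 4  # rough assumption; use the model's own tokenizer for real counts

def estimate_tokens(text: str) -> int:
    """Approximate the token count from the character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)

def fits_in_context(text: str, context_tokens: int, reserved_output_tokens: int = 1024) -> bool:
    """Check whether the text fits while leaving room for the model's reply."""
    return estimate_tokens(text) <= context_tokens - reserved_output_tokens

def split_for_context(text: str, context_tokens: int, reserved_output_tokens: int = 1024) -> list:
    """Split text into character chunks that each fit the estimated token budget."""
    budget_chars = (context_tokens - reserved_output_tokens) * CHARS_PER_TOKEN
    if budget_chars <= 0:
        raise ValueError("context window is too small for the reserved output budget")
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]

# A stand-in document of 1.2 million characters (about 300K estimated tokens).
document = "x" * 1_200_000
print(estimate_tokens(document))
print(fits_in_context(document, context_tokens=256_000))
print(len(split_for_context(document, context_tokens=256_000)))
```

In practice you would split on paragraph or section boundaries rather than raw character offsets, so that each chunk stays readable on its own.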

Falcon 3

Falcon 3 sets itself apart with strong multimodal capabilities, seamlessly processing text, images, audio, and video. Created by the UAE’s Technology Innovation Institute (TII), this model family offers multiple sizes: 1B, 3B, 7B, and 10B, along with Base and Instruct variants. Designed for both open-source and enterprise use, Falcon 3 is optimized for lightweight infrastructure such as laptops while maintaining high performance with a ~131K token vocabulary and 32K context length.

Reasons to choose:

- Multimodal capabilities across text, image, audio, and video

- Runs efficiently on lighter hardware with quantized versions

- Improved reasoning, instruction following, and fine-tuning

- Open-source and enterprise-friendly with lower deployment costs
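
To see why quantized versions matter on lighter hardware, a back-of-the-envelope calculation helps. The sketch below estimates the memory needed for model weights alone at different precisions, using the model sizes listed above. It is an approximation under stated assumptions: it ignores activations, the KV cache, and quantization overhead, and it counts 1 GB as 10^9 bytes.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB: parameters x bits / 8 bits per byte."""
    return params_billion * bits_per_weight / 8

# Model sizes from the text, in billions of parameters.
sizes_b = [1, 3, 7, 10]

for size in sizes_b:
    fp16 = weight_memory_gb(size, 16)  # 16-bit weights
    int4 = weight_memory_gb(size, 4)   # 4-bit quantized weights
    print(f"{size}B model: ~{fp16:.1f} GB at 16-bit, ~{int4:.1f} GB at 4-bit")
```

By this estimate a 10B model drops from about 20 GB of weights at 16-bit to about 5 GB at 4-bit, which is the difference between needing a dedicated GPU server and fitting on a well-equipped laptop.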

PaLM 2

More than just an upgrade, PaLM 2 represents Google DeepMind’s push toward smarter, faster, and more versatile LLMs. With support for over 100 languages, it goes beyond translation by capturing cultural nuances, idioms, and context. Its refined reasoning abilities make it particularly effective in fields like math, science, and software development. Unlike its predecessor, PaLM 2 achieves this while being more compute-efficient, delivering faster inference at lower costs. Today, it quietly powers a range of Google products, proving its strength as an enterprise-ready solution.

Reasons to choose:

- Excels in logical reasoning, math, science, and coding tasks

- Handles nuanced multilingual communication

- More efficient scaling for cost-effective performance

- Integrated into widely used Google services

Bloom 6.1

Recognized as one of the most transparent and community-driven LLMs, Bloom 6.1 pushes the boundaries of openness in AI. The new update strengthens multilingual accuracy, enhances robustness across reasoning tasks, and expands generalization for diverse use cases. With its open license, Bloom 6.1 remains a trusted choice for researchers, developers, and organizations seeking a scalable, vendor-free AI solution.

Reasons to choose:

- Open-source with unmatched transparency and collaboration

- Advanced multilingual and cross-domain performance

- Cost-efficient for tasks like summarization, translation, and text generation

- Reliable choice for both experimentation and production environments

Conclusion

Large Language Models (LLMs) are undeniably reshaping the AI industry. Our curated list of the best LLM models should give you a clearer picture of how different LLMs can be used and which one best fits your project goals. As LLMs continue to evolve, businesses need to evolve with them, and adopting the right LLM model is how they stay competitive in an AI-driven marketplace. If you want to put the full potential of LLMs to work in your business, partnering with a trusted LLM development company can help you achieve long-term success.

Frequently Asked Questions (FAQs)

Large Language Models, or LLMs, are advanced AI systems designed to understand and generate human-like text. They are pre-trained on massive datasets and respond to instructions written in natural language. Models such as GPT-4 and Gemini can handle tasks like content creation, question answering, language translation, and more.

Choosing the right LLM depends on your specific project, business needs, and use case. For content generation, OpenAI’s GPT models are a great choice, while GitHub Copilot is a good pick if you need code assistance. Consider factors like accuracy, scalability, and integration capabilities when selecting the LLM model that best fits your needs.
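
One simple way to compare candidates on factors like these is a weighted score. The sketch below is only an illustration: the model names, the 1 to 10 scores, and the weights are placeholder values that you would replace with results from your own evaluation.

```python
def rank_models(candidates: dict, weights: dict) -> list:
    """Return (name, weighted_score) pairs sorted from best to worst."""
    total_weight = sum(weights.values())
    ranked = []
    for name, scores in candidates.items():
        weighted = sum(weights[factor] * scores[factor] for factor in weights)
        ranked.append((name, round(weighted / total_weight, 2)))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)

# Placeholder weights: how much each factor matters to a hypothetical business.
weights = {"accuracy": 5, "scalability": 2, "integration": 3}

# Placeholder scores on a 1 to 10 scale; not measurements of any real model.
candidates = {
    "model_a": {"accuracy": 9, "scalability": 6, "integration": 7},
    "model_b": {"accuracy": 7, "scalability": 9, "integration": 9},
}

ranking = rank_models(candidates, weights)
for name, score in ranking:
    print(name, score)
```

Changing the weights changes the winner, which is the point: the best model depends on what your use case values most.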

LLMs can be integrated with other tools and systems through APIs or custom applications. Top models such as GPT-4 and BERT offer integration options to automate workflows, enhance customer support, or streamline business operations.

Numerous industries such as healthcare, finance, retail, education, eCommerce, gaming, insurance, and others can gain significant advantages from utilizing LLMs. They can use LLMs to automate processes, improve customer interactions, analyze large datasets, generate high-quality content, and drive valuable insights for decision-making.

To get the most out of these best LLM models, small to mid-sized businesses can use optimization tools like Hugging Face Transformers with AutoGPTQ or Axolotl. These make models faster, cheaper, and easier to fine-tune for your needs.

Yes, enterprises need models they can rely on. LLMs like GPT-5, Claude 4, Mistral Large, and Gemini 2.5 stand out because they deliver accurate responses, handle real-world business tasks well, and are trusted for consistent results. These models balance performance with reliability, making them strong choices for enterprise use.